What if your workflows could process tens of thousands of files in parallel without missing a beat? For many, scaling n8n workflows to handle such massive workloads feels like navigating a minefield of challenges: memory overload, rate limits, and error propagation, to name a few. But here’s the truth: with the right strategies, you can transform your workflows into a powerhouse of efficiency and reliability. Imagine a system so robust it not only tackles large-scale data ingestion but thrives under the pressure. That’s the promise of this walkthrough, and we’re here to show you how to make it happen.

In this guide, created by the experts at The AI Automators, you’ll uncover actionable techniques to infinitely scale your n8n RAG workflows. From designing orchestrator workflows that distribute tasks seamlessly to optimizing infrastructure for high-volume processing, every step is designed to help you overcome the hurdles of scaling. Along the way, you’ll learn how to implement batching, error-handling layers, and concurrency strategies that ensure your workflows remain efficient and resilient, even as your data demands grow. Let’s explore what it takes to build workflows that don’t just scale but redefine what’s possible.

Scaling n8n Workflows Efficiently

TL;DR Key Takeaways:

- Key Challenges in Scaling: Issues like memory overload, database constraints, and external service rate limits can hinder workflow performance and reliability.

- Orchestrator Workflow Design: Strategies such as batch processing, webhook triggers, queue systems, and dedicated error-handling workflows are essential for scalability and reliability.

- Optimization Strategies: Techniques like binary data separation, database optimization, batching, filtering, and using concurrency improve workflow efficiency and prevent system crashes.

- Lessons Learned: Effective scaling requires execution data management, robust error handling, data segmentation, resource monitoring, and continuous infrastructure tuning.

- Infrastructure Considerations: Use SFTP servers, optimize environments like Supabase and n8n, and design modular workflows to ensure scalability and high performance.

Key Challenges in Scaling Workflows

Scaling workflows involves overcoming several critical obstacles that can affect efficiency and reliability. These challenges include:

- Memory Overload: Processing large volumes of data within a single workflow can exhaust server resources, leading to crashes and reduced performance.

- Database and Service Limits: Many external services, such as OpenAI or Supabase, impose rate limits that can create bottlenecks and slow down processing.

- Error Propagation: Unhandled errors or edge cases can disrupt workflows, causing unexpected interruptions and delays.

- Single-Workflow Inefficiencies: Consolidating all tasks into one workflow can lead to slower processing times and reduced scalability.

Addressing these challenges requires a thoughtful approach to workflow design, one that distributes tasks efficiently and tunes each system for high-volume operation.

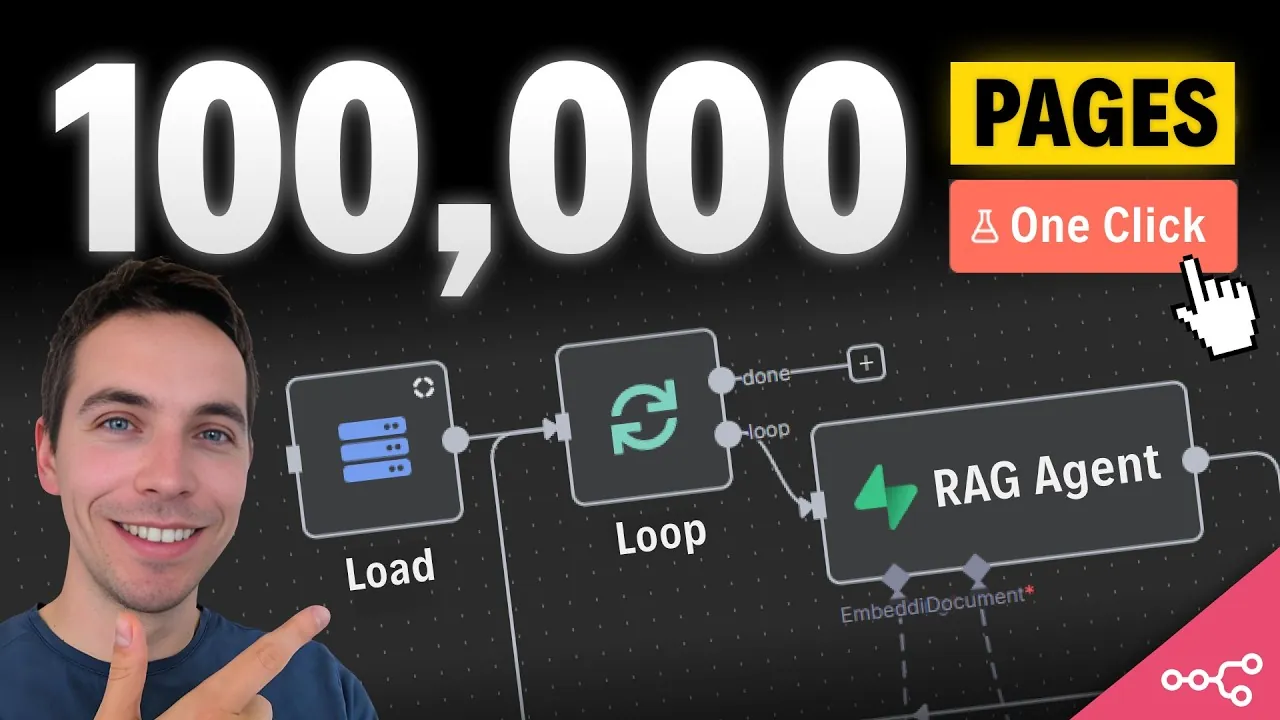

Designing an Orchestrator Workflow

An orchestrator workflow is essential for distributing tasks effectively and ensuring reliability at scale. To build one, consider the following strategies:

- Batch Processing: Divide files into smaller, manageable batches, such as 50 files per batch, to enable parallel processing and reduce strain on resources.

- Webhook Triggers: Replace sub-executions with webhook triggers to minimize resource consumption and improve system performance.

- Queue System: Implement a queue to monitor task execution, automatically retry failed tasks, and maintain workflow continuity.

- Error Handling: Create a dedicated workflow for managing errors, logging issues, and retrying incomplete executions to ensure reliability.

By implementing these techniques, you can design workflows that are both scalable and resilient, capable of handling large-scale tasks without compromising performance.
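
To make the batching idea concrete, here is a minimal sketch of how an orchestrator might split a file list into batches of 50 and prepare one payload per batch for a worker webhook. The payload fields (`run_id`, `batch_index`, `files`) and the file names are hypothetical, chosen only for illustration. They are not n8n's own format, and the actual HTTP call to the webhook is left out.

```python
from typing import Iterator, Sequence

BATCH_SIZE = 50  # the example batch size mentioned above


def chunk(items: Sequence[str], size: int = BATCH_SIZE) -> Iterator[list[str]]:
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def build_payloads(files: Sequence[str], run_id: str) -> list[dict]:
    """Build one JSON-serializable payload per batch (hypothetical shape)."""
    return [
        {"run_id": run_id, "batch_index": index, "files": batch}
        for index, batch in enumerate(chunk(files))
    ]


# Demo with made-up file names: 120 files become batches of 50, 50, and 20.
files = [f"doc_{n:05d}.pdf" for n in range(120)]
payloads = build_payloads(files, run_id="demo-run")
for payload in payloads:
    print(payload["batch_index"], len(payload["files"]))
```

Each payload could then be posted to the webhook URL of a processing workflow, so that every batch runs as its own execution rather than inside the orchestrator.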

Optimization Strategies for Workflow Efficiency

Optimizing workflows is critical to managing large-scale data processing while maintaining high performance. Here are some effective strategies:

- Binary Data Handling: Process binary data in separate workflows to reduce memory usage and prevent system crashes.

- Database Optimization: Use tools like Supabase to manage parent-child execution tables, ensuring data integrity and scalability.

- Batching and Filtering: Group files into batches and filter out unnecessary data to streamline operations and improve efficiency.

- Concurrency: Use n8n’s queue mode to distribute workloads across multiple workers, significantly increasing throughput.

These strategies help ensure that workflows remain efficient and capable of handling increasing data volumes without sacrificing reliability or speed.
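
The parent-child execution tables mentioned above can be sketched roughly as follows. This is only an illustration under stated assumptions: it uses Python's built-in `sqlite3` as a stand-in for Supabase (which runs on Postgres), and the table names, column names, and status values are hypothetical rather than taken from the original workflow.

```python
import sqlite3

# In-memory SQLite database as a stand-in for a Supabase (Postgres) project.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE parent_runs (
    id INTEGER PRIMARY KEY,
    started_at TEXT DEFAULT CURRENT_TIMESTAMP
);
CREATE TABLE child_executions (
    id INTEGER PRIMARY KEY,
    parent_id INTEGER NOT NULL REFERENCES parent_runs(id),
    batch_index INTEGER NOT NULL,
    status TEXT NOT NULL DEFAULT 'pending',
    attempts INTEGER NOT NULL DEFAULT 0
);
""")


def start_run(batch_count: int) -> int:
    """Insert one parent row plus one pending child row per batch."""
    cursor = conn.execute("INSERT INTO parent_runs DEFAULT VALUES")
    run_id = cursor.lastrowid
    conn.executemany(
        "INSERT INTO child_executions (parent_id, batch_index) VALUES (?, ?)",
        [(run_id, index) for index in range(batch_count)],
    )
    return run_id


def mark(run_id: int, batch_index: int, status: str) -> None:
    """Record the outcome of one attempt at a batch."""
    conn.execute(
        "UPDATE child_executions SET status = ?, attempts = attempts + 1 "
        "WHERE parent_id = ? AND batch_index = ?",
        (status, run_id, batch_index),
    )


def batches_to_retry(run_id: int, max_attempts: int = 3) -> list[int]:
    """Return failed batches that still have attempts left."""
    rows = conn.execute(
        "SELECT batch_index FROM child_executions "
        "WHERE parent_id = ? AND status = 'failed' AND attempts < ? "
        "ORDER BY batch_index",
        (run_id, max_attempts),
    )
    return [row[0] for row in rows]


run_id = start_run(batch_count=3)
mark(run_id, 0, "done")
mark(run_id, 1, "failed")
print(batches_to_retry(run_id))
```

Keeping one row per child execution lets the orchestrator see at a glance which batches finished, which are still pending, and which are worth retrying.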

Lessons Learned from Scaling Workflows

Scaling workflows is an iterative process that requires continuous learning and refinement. Key lessons from scaling efforts include:

- Execution Data Management: Disable saving execution data for successful executions to prevent database bloat and improve performance.

- Error Handling Layers: Implement multiple error-handling mechanisms, such as retry workflows and catch-all processes, to address failures effectively.

- Data Segmentation: Avoid overloading workflows with excessive data; instead, process files individually or in smaller groups when feasible.

- Resource Monitoring: Regularly monitor and scale Supabase compute and storage resources to meet growing demands and avoid performance bottlenecks.

- Infrastructure Tuning: Continuously fine-tune workflows and infrastructure to adapt to increasing scale and evolving requirements.

These lessons highlight the importance of proactive monitoring and iterative improvements to maintain workflow efficiency and scalability.
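
As a rough illustration of layered error handling, the sketch below combines a retry layer (with exponential backoff) and a catch-all layer that records anything still failing so it can be reviewed or re-queued later. The function names, the retry count of 3, and the simulated failure are all assumptions made for the example. In n8n itself these layers would typically be separate workflows or node settings rather than Python functions.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Layer 1: retry a task, doubling the wait after each failure."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return task()
        except Exception as exc:
            last_error = exc
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    raise last_error


def process_all(
    tasks: dict[str, Callable[[], T]],
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[dict[str, T], dict[str, str]]:
    """Layer 2: catch-all that logs exhausted tasks instead of crashing."""
    done: dict[str, T] = {}
    dead_letter: dict[str, str] = {}
    for name, task in tasks.items():
        try:
            done[name] = run_with_retries(task, sleep=sleep)
        except Exception as exc:
            dead_letter[name] = repr(exc)
    return done, dead_letter


def make_flaky(failures: int) -> Callable[[], str]:
    """Return a demo task that raises `failures` times, then succeeds."""
    state = {"calls": 0}

    def task() -> str:
        state["calls"] += 1
        if state["calls"] <= failures:
            raise RuntimeError("simulated rate limit")
        return "ok"

    return task


done, dead_letter = process_all(
    {"batch-0": make_flaky(2), "batch-1": make_flaky(10)},
    sleep=lambda seconds: None,  # skip real waiting in this demo
)
print(done, dead_letter)
```

Here `batch-0` recovers on its third attempt, while `batch-1` exhausts its retries and lands in `dead_letter` without stopping the rest of the run.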

Performance Insights

Optimized workflows can deliver significant improvements in speed and efficiency, allowing the processing of thousands of files per hour. Key performance insights include:

- Benchmarking: Regularly track workflow performance to identify bottlenecks and implement targeted optimizations.

- External Resource Utilization: Optimize workflows to maximize the efficiency of external services, reducing processing time and improving overall performance.

By focusing on these performance metrics, you can ensure that workflows operate at peak efficiency, even as data volumes increase.
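
For benchmarking, a simple throughput calculation is often enough to spot a bottleneck. The sketch below uses made-up numbers (250 files in 3 minutes, 10,000 files remaining) purely to show the arithmetic. They are not measurements from the original workflows.

```python
def files_per_hour(files_processed: int, elapsed_seconds: float) -> float:
    """Convert a measured sample into an hourly throughput figure."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return files_processed * 3600 / elapsed_seconds


def hours_remaining(files_left: int, rate_per_hour: float) -> float:
    """Estimate how long the remaining files will take at the current rate."""
    if rate_per_hour <= 0:
        raise ValueError("rate_per_hour must be positive")
    return files_left / rate_per_hour


# Hypothetical sample: 250 files processed in 180 seconds.
rate = files_per_hour(250, 180)
eta = hours_remaining(10_000, rate)
print(f"{rate:.0f} files/hour, about {eta:.1f} hours for 10,000 files")
```

Recording this figure before and after each change makes it easier to tell whether an optimization actually helped.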

Infrastructure Considerations for Scaling

A robust infrastructure is essential for scaling workflows effectively. To build a scalable system, consider the following recommendations:

- SFTP Servers: Use SFTP servers for file storage instead of slower APIs like Google Drive to ensure faster access and processing times.

- Environment Configuration: Optimize Supabase and n8n environments for high-volume data processing, ensuring reliability and performance.

- Workflow Modularity: Design modular workflows that can be easily scaled and maintained, simplifying the management of complex processes.

These infrastructure considerations provide the foundation for building workflows that can handle large-scale data processing with ease and reliability.

By addressing common challenges, implementing efficient orchestrator workflows, and optimizing both infrastructure and performance, you can scale your n8n workflows to meet the demands of high-volume data processing. With the right strategies in place, your workflows will achieve unparalleled efficiency, reliability, and scalability.

Media Credit: The AI Automators
