Efficient token management is a cornerstone of working effectively with Claude, because every part of an interaction (prompts, responses and conversation history) adds to the total token count. Below, Nate Herk highlights how unchecked token usage can lead to unnecessary costs and diminished performance, especially during extended sessions or complex workflows. For example, using the /clear command to reset the context before an unrelated task is a simple yet impactful way to prevent token accumulation. By adopting structured approaches like this, users can keep costs down without sacrificing the quality of their outputs.

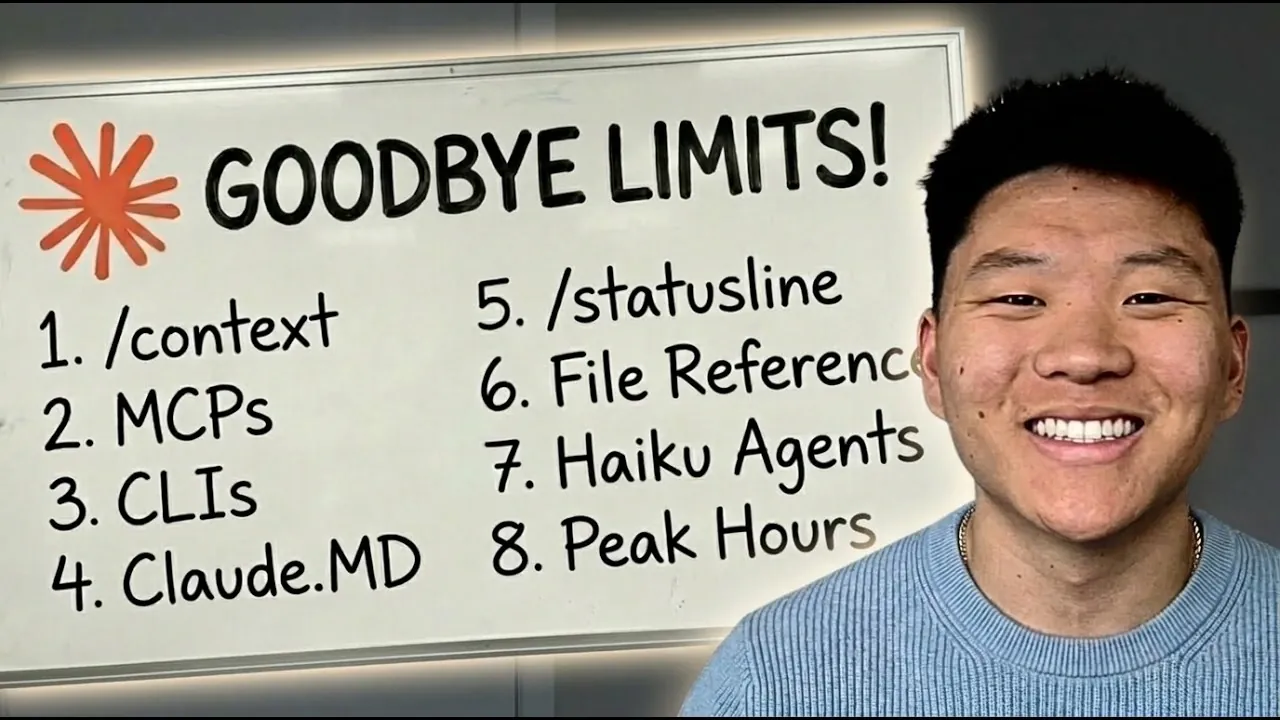

In this overview, you’ll gain insight into 18 practical strategies to optimize token usage, from foundational techniques like batching prompts and monitoring token consumption in real time to advanced methods such as refining context and selecting the right Claude model for specific tasks. Explore how to streamline workflows by managing conversation history, condensing context and scheduling tasks efficiently. These actionable tips are designed to help you reduce overhead, extend session longevity and ensure smoother operations with Claude.

Understanding Tokens & Their Importance

TL;DR Key Takeaways:

- Efficient token management is crucial for reducing costs and maintaining productivity when using Claude, as excessive token usage can degrade performance.

- Basic strategies like starting fresh conversations, batching prompts and monitoring token usage can immediately optimize workflows and minimize overhead.

- Intermediate techniques include streamlining files, condensing context and limiting unnecessary outputs to refine token consumption further.

- Advanced methods such as selecting the appropriate Claude model, optimizing session timing and using sub-agents sparingly help maximize efficiency and cost-effectiveness.

- Adopting long-term best practices like maintaining context hygiene, balancing quality and cost and strategically planning workflows ensures sustained efficiency and high-quality outputs.

Claude processes all text (prompts, responses and conversation history) in the form of tokens. Each interaction adds to the total token count, and because earlier history is reread on later turns, this compounding effect can escalate costs and degrade performance if not managed effectively. Inefficient practices, such as retaining irrelevant conversation history or failing to monitor token usage, exacerbate these challenges. A structured approach to token optimization is essential to maintain cost-effectiveness and ensure smooth operations.
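The compounding effect is easier to see with a little arithmetic. The sketch below is only an illustration: it assumes every turn rereads the full conversation history with no caching or compaction, and the function name and the 500-token turn size are made up for the example rather than measured from Claude.

```python
def cumulative_input_tokens(turn_sizes):
    """Total tokens processed if every turn rereads the whole history.

    turn_sizes: tokens each turn adds to the history (prompt plus response).
    Assumption: no caching and no compaction, so turn k covers turns 1..k.
    """
    total = 0
    history = 0
    for size in turn_sizes:
        history += size   # the history grows by this turn's tokens
        total += history  # the whole history, including this turn, is counted
    return total


# Ten hypothetical turns of 500 tokens each in one long session
one_long_session = cumulative_input_tokens([500] * 10)

# The same ten turns split into two fresh sessions of five turns each
two_short_sessions = 2 * cumulative_input_tokens([500] * 5)

print(one_long_session, two_short_sessions)
```

Under these assumptions the single long session processes noticeably more tokens than the two shorter ones, which is the intuition behind resetting context between unrelated tasks.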

Tier 1 Hacks: Foundational Strategies

Begin with these basic yet effective strategies to immediately reduce token consumption and improve workflow efficiency:

- Start Fresh Conversations: Use the /clear command to reset the context for unrelated tasks, preventing unnecessary history rereads and token accumulation.

- Disconnect Unused Servers: Disconnect inactive MCP servers to eliminate invisible token overhead that can inflate costs.

- Batch Prompts: Combine multiple prompts into a single message to minimize token usage and streamline interactions.

- Plan Mode: Use “plan mode” to outline tasks in advance, reducing redundant token consumption during execution.

- Monitor Tokens: Track token usage with the /context and /cost commands to stay informed and adjust workflows as needed.

- Real-Time Tracking: Set up a status line to monitor token usage in real time, making sure you stay within budget and avoid unnecessary overhead.

- Selective Pasting: Avoid pasting irrelevant or excessive content into Claude, as this can increase processing costs and reduce efficiency.

- Active Monitoring: Regularly check Claude’s progress to ensure tokens are not wasted on irrelevant or low-priority outputs.
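To make the case for batching concrete, here is a rough comparison of sending three prompts separately versus combining them into one message. It is a sketch under stated assumptions: the full history is reread as input on every message, and all token counts (a 2,000-token base context, 100-token prompts, 300-token replies) are hypothetical numbers chosen for illustration.

```python
def input_tokens_separate(base_context, prompts, response_size):
    """Input tokens when each prompt is sent as its own message."""
    total = 0
    history = base_context
    for prompt in prompts:
        history += prompt         # the new prompt joins the history
        total += history          # the full history is read as input
        history += response_size  # the reply is carried into later turns
    return total


def input_tokens_batched(base_context, prompts):
    """Input tokens when all prompts are combined into one message."""
    return base_context + sum(prompts)


prompts = [100, 100, 100]  # hypothetical prompt sizes in tokens
separate = input_tokens_separate(2000, prompts, response_size=300)
batched = input_tokens_batched(2000, prompts)
print(separate, batched)
```

In this toy model the batched message reads the base context once instead of three times. Real savings depend on caching and on how long each reply is.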


Tier 2 Hacks: Intermediate Strategies for Refinement

For users looking to refine their workflows further, these intermediate strategies focus on context management and file optimization:

- Streamline Files: Keep the CLAUDE.md file concise and focused on essential information to avoid unnecessary exploration and token usage.

- Specific References: Be precise when referencing files or data to limit token consumption and improve response accuracy.

- Compact Context: Manually condense the context when it reaches 60% capacity to maintain quality and prevent token bloat.

- Minimize Breaks: Avoid pauses longer than five minutes during sessions, as cached context can expire in the meantime and trigger full context reprocessing, increasing token usage unnecessarily.

- Limit Outputs: Restrict command outputs to only what is necessary, preventing excessive token consumption on irrelevant details.
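Two of the tips above, compacting at 60% capacity and limiting command output, can be expressed as small helper checks. This is a minimal sketch: the function names are hypothetical, the 200,000-token window in the usage lines is an assumed figure, and in practice you would read actual usage from the /context command rather than compute it yourself.

```python
def should_compact(used_tokens, context_window, threshold=0.60):
    """Return True once context usage reaches the threshold fraction."""
    if context_window <= 0:
        raise ValueError("context_window must be positive")
    return used_tokens / context_window >= threshold


def tail_lines(output, max_lines=20):
    """Keep only the last max_lines lines of a command's output."""
    if max_lines <= 0:
        return ""
    lines = output.splitlines()
    return "\n".join(lines[-max_lines:])


# Assumed figures: 130,000 tokens used out of a 200,000-token window
print(should_compact(130_000, 200_000))

# Trim a long (made-up) build log to its final two lines before sharing it
log = "step 1 ok\nstep 2 ok\nstep 3 failed\nexit code 1"
print(tail_lines(log, max_lines=2))
```

Keeping only the tail of a log is one design choice among several. For some commands the first lines, or only the lines containing errors, are the ones worth keeping.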

Tier 3 Hacks: Advanced Techniques for Expert Users

For those seeking to maximize efficiency, these advanced techniques focus on optimizing model selection, sub-agent usage and session timing:

- Model Selection: Choose the appropriate Claude model (Haiku, Sonnet or Opus) based on the complexity of your task to balance cost and performance effectively.

- Sub-Agent Usage: Use sub-agents sparingly, as they consume significantly more tokens compared to direct interactions with Claude.

- Task Scheduling: Schedule resource-intensive tasks during off-peak hours to maximize session efficiency and reduce token costs.

- File Optimization: Regularly optimize the CLAUDE.md file to serve as a reliable source of truth for decisions and progress summaries, minimizing redundant token usage.
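The model selection tip can be thought of as a simple routing rule. The sketch below is a hypothetical heuristic, not an official recommendation: the three complexity levels and the mapping are assumptions, and the strings are model family names rather than real model identifiers.

```python
from enum import Enum


class Complexity(Enum):
    SIMPLE = 1    # e.g. renaming, formatting, short lookups
    MODERATE = 2  # e.g. everyday coding and writing tasks
    COMPLEX = 3   # e.g. multi-step reasoning or large refactors


# Assumed mapping from task complexity to model family (lightest to heaviest)
MODEL_FOR = {
    Complexity.SIMPLE: "haiku",
    Complexity.MODERATE: "sonnet",
    Complexity.COMPLEX: "opus",
}


def pick_model(complexity):
    """Return the model family name assumed suitable for the task."""
    return MODEL_FOR[complexity]


print(pick_model(Complexity.SIMPLE))
print(pick_model(Complexity.COMPLEX))
```

The idea is to default to the lightest model that handles the task well and move up only when the output quality demands it.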

General Best Practices for Long-Term Efficiency

To ensure sustained efficiency and cost-effectiveness, adopt these overarching principles in your workflows:

- Balance Quality and Cost: Strive to optimize token usage without compromising the quality of outputs, striking a balance between efficiency and effectiveness.

- Context Hygiene: Regularly clean up and maintain a relevant context to avoid unnecessary token consumption and improve response accuracy.

- Strategic Timing: Plan workflows and sessions efficiently, aligning tasks with optimal times to maximize productivity and minimize costs.

By understanding how tokens are consumed and applying these 18 hacks, you can significantly reduce costs, extend session longevity and improve overall workflow efficiency. Whether you are managing conversation history, selecting the right model, or monitoring tokens in real time, these practices will help you fully use Claude’s capabilities while maintaining high-quality outputs.

Media Credit: Nate Herk | AI Automation
