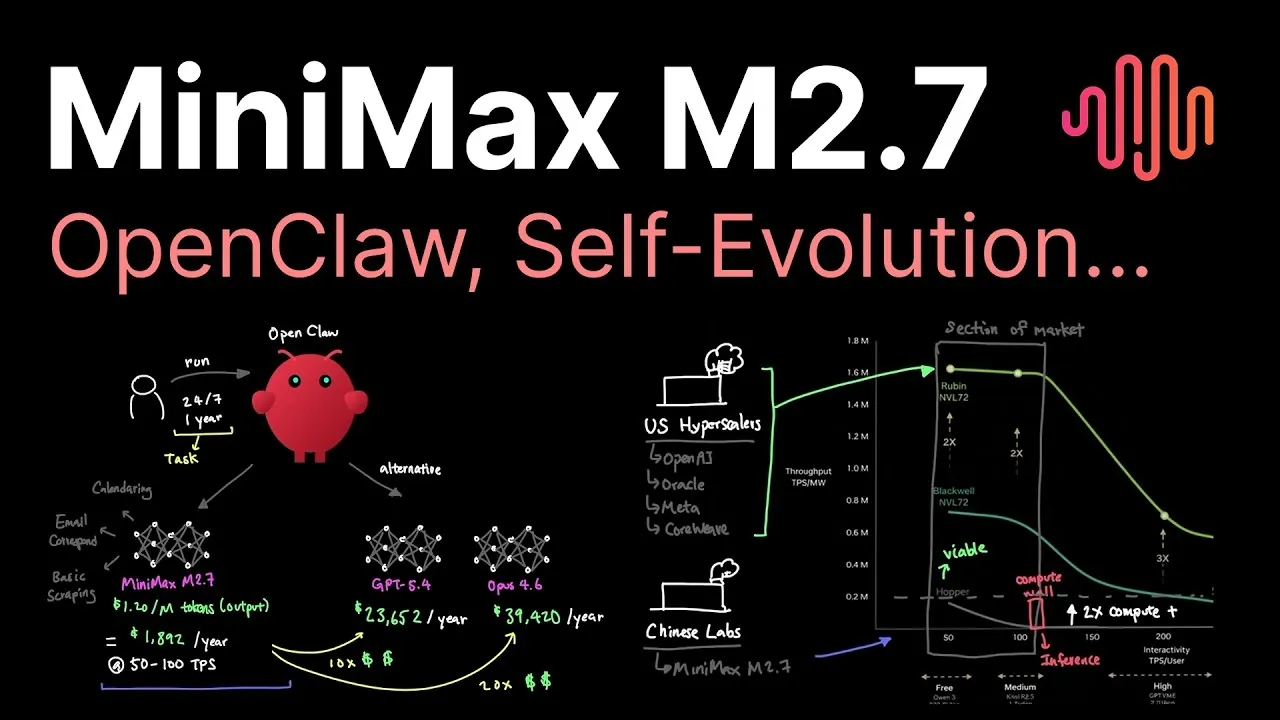

MiniMax M2.7 offers a balanced approach to artificial intelligence, designed to meet the needs of organizations constrained by computational resources. With 230 billion parameters and a processing speed of 50-100 tokens per second, it provides a practical solution for tasks that require both precision and efficiency. Caleb Writes Code explores how this mid-tier model stands out by addressing compute limitations through features like a 200,000-token context window and compatibility with older hardware architectures. These characteristics make MiniMax M2.7 particularly effective for agentic applications such as OpenClaw, as well as for privacy-sensitive deployments.

In this guide, you’ll gain insight into how MiniMax M2.7 compares to both cost-efficient and premium AI models, including its role in bridging global disparities in compute access. Discover its impact on workflow automation, from reducing manual effort by up to 50% to accelerating model release cycles. You’ll also explore the trade-offs of its full attention mechanism and how it competes with hybrid architectures in terms of resource optimization. This breakdown provides a clear view of MiniMax M2.7’s capabilities and its relevance in today’s evolving AI landscape.

What is MiniMax M2.7?

TL;DR Key Takeaways:

- MiniMax M2.7 is a mid-tier AI model with 230 billion parameters, designed for cost-efficient performance in compute-constrained environments, with an annual operational cost of approximately $2,000.

- It supports privacy-sensitive applications and local deployment, offering a 200,000-token context window for handling complex workflows and agentic tasks in frameworks like OpenClaw.

- The model enhances automation in machine learning workflows, reducing manual effort by 30-50% and allowing faster model release cycles and streamlined processes.

- MiniMax M2.7 addresses global disparities in compute availability by using older GPU architectures, making it accessible to markets with limited hardware resources.

- The AI market is bifurcating between cost-efficient models like MiniMax M2.7 and premium frontier models, with MiniMax playing a critical role in broadening AI access for resource-constrained organizations.

MiniMax M2.7 is a mid-tier AI model boasting 230 billion parameters, optimized for tasks that require moderate computational resources. It achieves a processing speed of 50-100 tokens per second and supports local deployment, making it particularly suitable for privacy-sensitive applications. With an annual operational cost of approximately $2,000, MiniMax M2.7 provides an affordable yet reliable solution for organizations seeking advanced AI capabilities without the financial burden of premium models.
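To make the 50-100 tokens per second figure more concrete, the short sketch below converts a decode rate into wall-clock time for a single reply and into daily output. It is a back-of-the-envelope estimate under stated assumptions: a steady decode rate, no prefill or network latency, and a hypothetical 2,000-token reply length chosen purely for illustration.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady decode rate (ignores prefill and latency)."""
    return num_tokens / tokens_per_second


def tokens_per_day(tokens_per_second: float, utilization: float = 1.0) -> float:
    """Tokens produced in 24 hours at a given rate and fraction of time spent busy."""
    return tokens_per_second * 86_400 * utilization


# The article's quoted range: 50-100 tokens per second.
for rate in (50, 100):
    # 2,000 tokens is a hypothetical reply length, not a figure from the source.
    print(
        f"{rate} tok/s: 2,000-token reply in {generation_seconds(2_000, rate):.0f} s, "
        f"{tokens_per_day(rate):,.0f} tokens/day at full utilization"
    )
```

At the low end of the range a 2,000-token answer takes about 40 seconds, and at the high end about 20 seconds, which is why throughput matters as much as raw capability for interactive agent loops.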

Key features of MiniMax M2.7 include:

- A large parameter count that enables handling of complex tasks with precision.

- A design that prioritizes accessibility and operational efficiency.

- Compatibility with limited budgets and compute-constrained environments.

This combination of features positions MiniMax M2.7 as an attractive option for businesses and researchers looking for cost-effective AI solutions that do not compromise on functionality.

Addressing Compute Constraints and Market Needs

Global disparities in compute availability play a critical role in shaping AI adoption. For instance, China faces a 2-3 generation lag in GPU technology compared to the United States, which limits access to cutting-edge hardware. In contrast, U.S.-based companies like OpenAI and Meta benefit from NVIDIA's advanced Vera Rubin chips, which enhance their efficiency and throughput.

MiniMax M2.7 remains competitive by using NVIDIA's older Hopper architecture, which is well-suited to mid-tier compute demands. This strategic decision keeps it relevant in markets where access to the latest GPU technology is limited, offering a cost-efficient alternative for organizations constrained by hardware availability. By addressing these disparities, MiniMax M2.7 plays a pivotal role in broadening AI access across diverse markets.

Agentic Applications and Workflow Automation

MiniMax M2.7 excels in agentic applications, such as OpenClaw, due to its 200,000-token context window. This feature allows the model to process extensive contextual information, making it ideal for tasks that require nuanced understanding and decision-making. Its ability to handle large-scale data inputs enhances its suitability for complex workflows.
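One way to picture what a 200,000-token window buys an agent is a context budget: the system prompt, the running history, and room for the model's reply must all fit inside the window. The sketch below keeps the most recent messages that fit. It is illustrative only: the function names are hypothetical, the 8,000-token output reserve is an arbitrary choice, and the 4-characters-per-token estimate is a rough heuristic rather than MiniMax's actual tokenizer.

```python
CONTEXT_WINDOW = 200_000  # tokens, per the article


def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token for English text.
    # A real deployment would count tokens with the model's own tokenizer.
    return max(1, len(text) // 4)


def fit_history(
    system_prompt: str,
    history: list[str],
    reserve_for_output: int = 8_000,
    window: int = CONTEXT_WINDOW,
) -> list[str]:
    """Return the most recent messages that fit alongside the system prompt."""
    budget = window - reserve_for_output - estimate_tokens(system_prompt)
    kept: list[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if cost > budget:
            break  # stop at the first message that no longer fits
        kept.append(message)
        budget -= cost
    return list(reversed(kept))  # restore chronological order


# Usage: 100 tool outputs of ~10,000 estimated tokens each; only the newest ones fit.
history = [f"{i:05d}" + "x" * 39_995 for i in range(100)]
kept = fit_history("You are a coding agent.", history)
print(f"kept {len(kept)} of {len(history)} messages")
```

With a smaller window the same loop would drop far more of the agent's earlier observations, which is the practical sense in which a long context helps multi-step workflows.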

The model also drives significant advancements in automation within machine learning workflows, reducing manual effort by 30-50%. For example, the transition from M2.5 to M2.7 was completed in just 34 days, demonstrating the efficiency gains achieved through automation. Additional benefits include:

- Faster model release cycles, allowing quicker deployment of AI solutions.

- Integration of agents for hyperparameter tuning, improving model performance.

- Streamlined workflow processes, reducing operational bottlenecks.
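The article does not describe how MiniMax's tuning agents work, so the following is only a generic sketch of the propose-evaluate-keep-best loop that such an agent would sit inside. Plain random search is swapped in for the agent's proposal step, and the objective, parameter names, and search ranges are hypothetical.

```python
import random
from typing import Callable, Dict, Optional, Tuple


def tune(
    objective: Callable[[Dict[str, float]], float],
    space: Dict[str, Tuple[float, float]],
    trials: int = 20,
    seed: int = 0,
) -> Tuple[Optional[Dict[str, float]], float]:
    """Minimal propose-evaluate-keep-best loop; random search stands in for an agent."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    best_params: Optional[Dict[str, float]] = None
    best_loss = float("inf")
    for _ in range(trials):
        # Proposal step: an agent would choose parameters from past results;
        # here we simply sample each one uniformly from its range.
        params = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        loss = objective(params)  # evaluation step (e.g. a short training run)
        if loss < best_loss:  # keep the best configuration seen so far
            best_params, best_loss = params, loss
    return best_params, best_loss


def toy_loss(p: Dict[str, float]) -> float:
    # Hypothetical objective with its minimum at lr=0.01, dropout=0.1.
    return (p["lr"] - 0.01) ** 2 + (p["dropout"] - 0.1) ** 2


params, loss = tune(toy_loss, {"lr": (0.0, 0.1), "dropout": (0.0, 0.5)}, trials=50)
print(params, loss)
```

The loop structure stays the same whether proposals come from random sampling or from an agent reasoning over previous results; the automation gain comes from running it without a human choosing each trial.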

These capabilities underscore MiniMax M2.7’s role in enhancing machine learning engineering practices, making it a valuable tool for organizations aiming to optimize their AI development pipelines.

Technical Design and Performance

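MiniMax M2.7 employs a full attention mechanism, in which every token attends to every other token in the context. This is what enables its large context window, but it also demands significant memory: the key-value cache grows linearly with context length, and attention compute grows quadratically. In return, the design strengthens its ability to handle complex, contextually rich tasks, making it a powerful tool for applications requiring detailed analysis and decision-making.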

However, this approach comes with trade-offs. Hybrid architectures, such as NVIDIA's Nemotron, offer more compute-efficient scaling, posing a challenge to MiniMax M2.7 in terms of resource optimization. Despite this, MiniMax M2.7 has demonstrated strong performance, ranking fourth on the Pinchbench benchmark for OpenClaw use cases. This ranking highlights its effectiveness in specific applications, even when competing with more advanced architectures.
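The memory side of this trade-off can be estimated with the standard key-value cache formula: two cached vectors (key and value) per KV head, per attention layer, per token. The sketch below applies it at the 200,000-token window. The configuration (62 layers, 8 KV heads with grouped-query attention, head dimension 128, 16-bit values) is an illustrative assumption, not MiniMax's published architecture, and the "hybrid" case simply assumes only 16 layers use attention while ignoring the small fixed-size state that non-attention layers carry.

```python
def kv_cache_bytes(
    seq_len: int,
    attention_layers: int = 62,  # assumed layer count, for illustration only
    kv_heads: int = 8,           # assumed grouped-query KV heads
    head_dim: int = 128,         # assumed per-head dimension
    bytes_per_value: int = 2,    # 16-bit (fp16/bf16) cache entries
    batch: int = 1,
) -> int:
    """Bytes needed to cache keys and values for every attention layer.

    The leading factor of 2 counts one key vector and one value vector
    per KV head, per layer, per token.
    """
    return 2 * attention_layers * kv_heads * head_dim * bytes_per_value * seq_len * batch


GIB = 1024 ** 3
full = kv_cache_bytes(200_000)  # every layer uses full attention
# Hypothetical hybrid: only 16 layers keep a KV cache; other layers' state is ignored.
hybrid = kv_cache_bytes(200_000, attention_layers=16)
print(f"full attention: {full / GIB:.1f} GiB, hybrid: {hybrid / GIB:.1f} GiB")
```

Under these assumptions a single full-length request needs roughly 47 GiB of cache with full attention versus about 12 GiB for the hybrid, and the cost scales linearly with both context length and batch size. That gap is the resource-optimization advantage hybrid designs compete on.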

The Bifurcation of the AI Market

The AI market is increasingly divided between cost-efficient models like MiniMax M2.7 and premium frontier models such as GPT-5.4 and Opus 4.7. While frontier models deliver faster inference speeds and higher intelligence, their annual costs range from $23,000 to $39,000, making them inaccessible to many organizations.
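The size of the gap is easiest to see as a ratio. The arithmetic below compares the quoted frontier range with the roughly $2,000 annual figure given for MiniMax M2.7. It assumes both figures describe the same workload; the article does not state the usage volume behind them, so treat the result as indicative only.

```python
MINIMAX_ANNUAL_USD = 2_000              # approximate figure quoted for MiniMax M2.7
FRONTIER_ANNUAL_USD = (23_000, 39_000)  # quoted range for frontier models


def cost_multiple(frontier: float, budget: float = MINIMAX_ANNUAL_USD) -> float:
    """How many times more the frontier option costs per year."""
    return frontier / budget


for frontier in FRONTIER_ANNUAL_USD:
    print(
        f"${frontier:,}/yr is {cost_multiple(frontier):.1f}x the MiniMax figure, "
        f"a difference of ${frontier - MINIMAX_ANNUAL_USD:,}/yr"
    )
```

On these numbers, frontier models cost between 11.5 and 19.5 times as much per year, a difference of $21,000 to $37,000.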

This bifurcation creates two distinct adoption paths:

- Organizations with limited resources prioritize cost-efficient models like MiniMax M2.7 to meet their AI needs without exceeding their budgets.

- Organizations with greater financial flexibility opt for frontier models to achieve superior performance and advanced capabilities.

This division underscores the importance of models like MiniMax M2.7 in keeping AI technology accessible to a broader range of users, particularly those operating in resource-constrained environments.

Looking Ahead: The Future of MiniMax

As the demand for cost-efficient AI solutions continues to grow, MiniMax models are expected to play an increasingly prominent role in the market. Ongoing constraints in compute supply further highlight the need for models like MiniMax M2.7, which balance performance and affordability to meet the needs of diverse users.

Future iterations, such as the anticipated M3 model, are likely to introduce architectural and efficiency improvements, potentially reshaping the competitive landscape. These advancements could further enhance MiniMax’s appeal, solidifying its position as a key player in the AI market. For now, MiniMax M2.7 remains a compelling choice for organizations navigating the challenges of compute availability and cost-efficiency in their AI adoption strategies.

Media Credit: Caleb Writes Code

Disclosure: Some of our articles include affiliate links. If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.