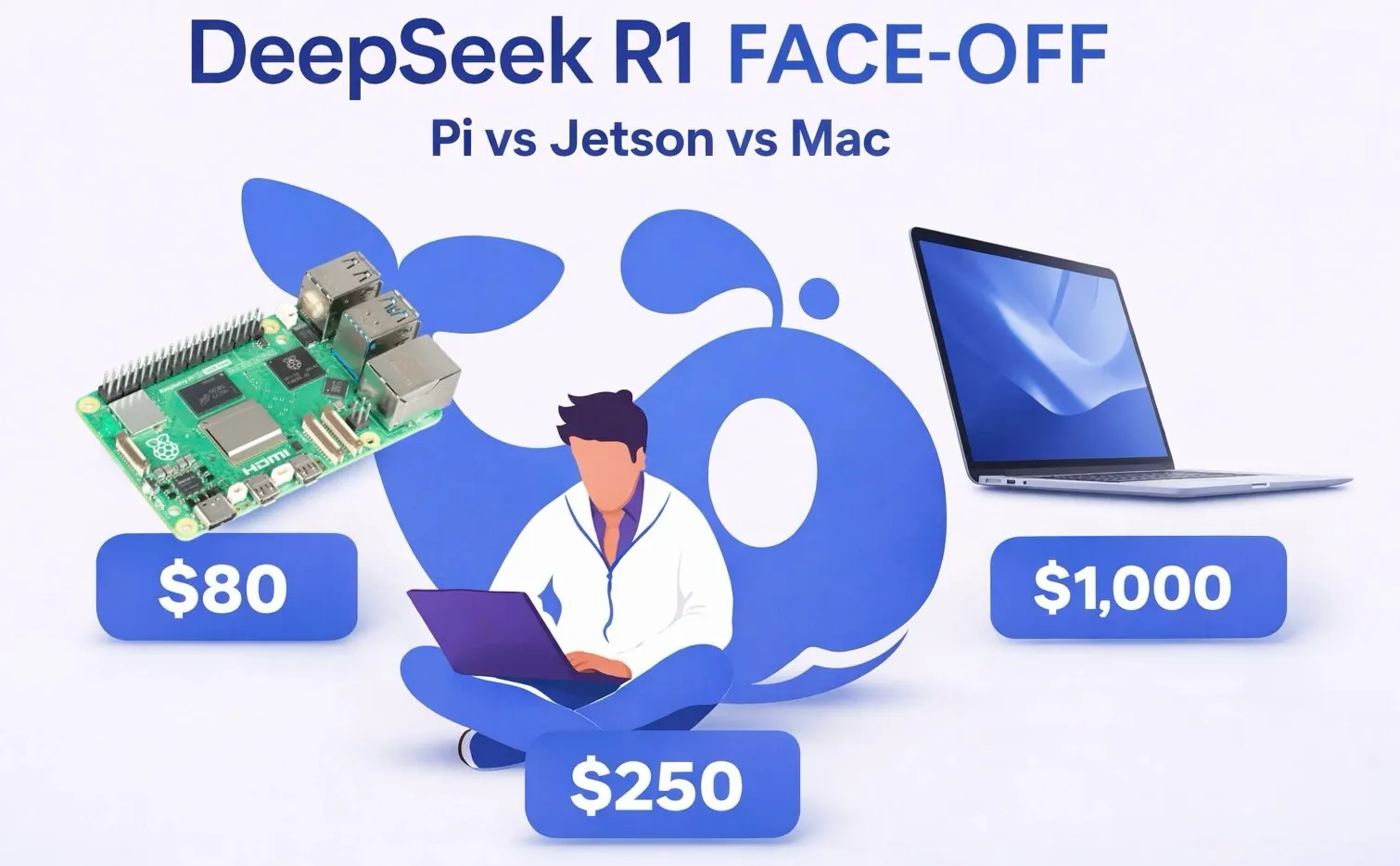

Running AI models locally can reveal surprising insights about cost, performance and usability. In her latest explainer, Joyce Lin examines how DeepSeek R1, a 1.5-billion-parameter reasoning-focused AI model, performs across three devices: the $80 Raspberry Pi 5, the $250 Nvidia Jetson Orin Nano and the $1000 MacBook Air M3. Using the Ollama framework for consistent setup and testing, the experiment highlights key differences in processing speed: the MacBook Air reached 72 tokens per second, compared with 9 tokens per second on the Raspberry Pi. Despite these disparities, the model’s task accuracy was consistent across all devices, which suggests it remains usable even on budget-friendly hardware.

Explore how each device balances cost and capability, from the Pi’s accessibility for hobbyists to the Jetson’s AI-focused design and the MacBook’s premium performance. Gain insight into how quantization techniques like Q4 and Q8 can shrink the model for resource-limited setups, and consider how these findings might inform your own AI projects. Whether you’re experimenting on a budget or optimizing for speed, this breakdown offers practical takeaways for deploying AI locally.

TL;DR Key Takeaways:

- DeepSeek R1 is a 1.5-billion-parameter open source AI model optimized for reasoning tasks. It balances computational efficiency with task performance and is freely available under the MIT license.

- The experiment tested DeepSeek R1 on three devices (Raspberry Pi 5, Nvidia Jetson Orin Nano and MacBook Air M3), highlighting trade-offs between cost, performance and usability for local AI deployment.

- Performance varied significantly, with the MacBook Air M3 achieving the fastest speed (72 tokens/second), followed by the Nvidia Jetson Orin Nano (22 tokens/second) and Raspberry Pi 5 (9 tokens/second).

- Task accuracy was consistent across all devices, with differences showing up primarily in processing speed rather than output quality.

- DeepSeek R1 can be run in quantized form, which reduces its memory footprint and makes it adaptable to various hardware configurations and budgets.

What is DeepSeek R1?

DeepSeek R1 is a compact AI model specifically designed for reasoning tasks such as solving math problems, generating code and tackling logic puzzles. With its 1.5 billion parameters, it achieves a balance between computational efficiency and task performance. Its relatively small size allows it to operate on devices with limited RAM while maintaining reasonable speeds. Released under the MIT license, it is freely accessible to developers and researchers. Its small footprint and reasoning focus make it well-suited to local deployment across a range of hardware configurations.

The Devices: A Range of Costs and Capabilities

The experiment evaluated DeepSeek R1 on three devices, each representing a distinct price-performance tier. These devices highlight the diversity in hardware options available for running AI models locally:

- Raspberry Pi 5 ($80): A highly affordable, entry-level device. While its computational power is limited, it demonstrates the feasibility of running AI models on budget-friendly hardware, making it an excellent choice for beginners and hobbyists.

- Nvidia Jetson Orin Nano ($250): A mid-range device engineered specifically for AI workloads. Equipped with an Nvidia GPU that includes Tensor Cores, it offers a balanced combination of cost and performance, catering to more demanding AI applications.

- MacBook Air M3 ($1000): A premium consumer laptop featuring advanced hardware. It delivers the fastest performance among the three devices, making it ideal for developers requiring high-speed processing for complex tasks.

How the Experiment Was Conducted

To ensure a fair comparison, the same setup process was applied across all devices. The Ollama framework, an open source tool for deploying AI models, was used to install and run DeepSeek R1. Identical prompts and configurations were employed, allowing for a direct evaluation of performance and usability. With the software held constant, differences in the results can be attributed to the hardware rather than to setup or configuration.
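For readers who want to reproduce this kind of measurement, here is a minimal Python sketch, not the script used in the original experiment. It assumes an Ollama server is running on its default local port and that the model was pulled under the tag `deepseek-r1:1.5b`. The prompt and the helper names (`tokens_per_second`, `run_prompt`, `main`) are hypothetical. Ollama's generate endpoint reports `eval_count` (generated tokens) and `eval_duration` (nanoseconds), which is enough to compute tokens per second.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "deepseek-r1:1.5b"  # assumed model tag; check `ollama list` on your device


def tokens_per_second(response: dict) -> float:
    """Compute generation speed from the timing fields in an Ollama response.

    eval_count is the number of generated tokens; eval_duration is the
    generation time in nanoseconds.
    """
    return response["eval_count"] / (response["eval_duration"] / 1e9)


def run_prompt(prompt: str, model: str = MODEL, url: str = OLLAMA_URL) -> dict:
    """Send one non-streaming generation request to a local Ollama server."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    request = urllib.request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as reply:
        return json.loads(reply.read())


def main() -> None:
    # Hypothetical prompt; the prompts used in the experiment are not listed.
    prompts = ["What is 17 * 23? Show your reasoning."]
    for prompt in prompts:
        result = run_prompt(prompt)
        print(f"{tokens_per_second(result):.1f} tokens/s")
```

Calling `main()` on each device with the same prompt list gives directly comparable numbers. Nothing runs on import, so the helper can also be reused on saved responses.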

Performance Results: Speed Matters

The experiment revealed significant variations in inference speed across the devices, highlighting the impact of hardware capabilities on performance:

- MacBook Air M3: The fastest performer, achieving a processing speed of 72 tokens per second. This makes it highly suitable for real-time applications and complex reasoning tasks where speed is critical.

- Nvidia Jetson Orin Nano: Delivered a moderate performance of 22 tokens per second. While slower than the MacBook, it remains a practical option for most AI applications, offering a good balance between cost and capability.

- Raspberry Pi 5: The slowest device, processing 9 tokens per second. Despite its limited speed, it successfully ran the model, demonstrating its potential as a low-cost platform for AI experimentation and learning.
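To put these figures in practical terms, the short Python sketch below converts the reported speeds into waiting time and relative speedup. The 500-token response length is a hypothetical value chosen for illustration; reasoning models often produce long outputs, so real waits may be longer.

```python
# Speeds reported in the article, in tokens per second.
SPEEDS = {"MacBook Air M3": 72, "Nvidia Jetson Orin Nano": 22, "Raspberry Pi 5": 9}

RESPONSE_TOKENS = 500  # hypothetical response length, chosen for illustration


def seconds_to_generate(tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate a response of the given length."""
    return tokens / tokens_per_second


def speedup_over(baseline: str, device: str, speeds: dict = SPEEDS) -> float:
    """How many times faster `device` is than `baseline`."""
    return speeds[device] / speeds[baseline]


for device, speed in SPEEDS.items():
    wait = seconds_to_generate(RESPONSE_TOKENS, speed)
    ratio = speedup_over("Raspberry Pi 5", device)
    print(f"{device}: {wait:.1f} s for {RESPONSE_TOKENS} tokens, {ratio:.1f}x the Pi")
```

Under that assumption the MacBook Air finishes in about 6.9 seconds, the Jetson in about 22.7 seconds and the Raspberry Pi in about 55.6 seconds. That makes the MacBook 8 times faster than the Pi and the Jetson roughly 2.4 times faster.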

Task Accuracy: Consistent Outputs Across Devices

DeepSeek R1 was tested on a variety of reasoning tasks, including solving math problems, generating code and addressing logic puzzles. The outputs were consistent across all three devices, with only minor variations due to the probabilistic nature of language models. The primary difference lay in the time required to generate responses, with the MacBook Air consistently outperforming the other devices in terms of speed. This consistency in task accuracy underscores the model’s reliability, regardless of the hardware used.
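The "probabilistic nature" mentioned above comes from how language models choose each next token. The toy Python sketch below illustrates temperature-based sampling over a handful of invented scores. It is not DeepSeek R1's or Ollama's actual decoding code, and the token names and values are hypothetical.

```python
import math
import random


def sample_token(logits: dict, temperature: float, rng: random.Random) -> str:
    """Pick one token from toy logits using temperature-scaled softmax sampling."""
    if temperature <= 0:
        # Greedy decoding: always return the highest-scoring token.
        return max(logits, key=logits.get)
    scaled = {token: score / temperature for token, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    weights = [math.exp(value - peak) for value in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


# Hypothetical next-token scores, invented for illustration.
TOY_LOGITS = {"391": 4.0, "Therefore": 3.5, "So": 3.2}

greedy = {sample_token(TOY_LOGITS, 0.0, random.Random(seed)) for seed in range(20)}
sampled = [sample_token(TOY_LOGITS, 0.8, random.Random(seed)) for seed in range(20)]
print("greedy picks:", greedy)
print("sampled picks:", sampled)
```

With a temperature of zero the same token is chosen every time. With a positive temperature the choice depends on the random seed, so identical prompts can yield slightly different wording from run to run on any hardware.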

Cost vs. Performance: Finding the Right Fit

The experiment highlights the trade-offs between cost and performance, offering insights into which device might be the best fit for different users:

- Raspberry Pi 5: Ideal for beginners, hobbyists, or those on a tight budget. While its performance is limited, it provides an accessible platform for learning and experimenting with AI.

- Nvidia Jetson Orin Nano: A balanced choice for users seeking reasonable performance at a mid-range price. It is well-suited for most AI projects without requiring a significant financial investment.

- MacBook Air M3: The top-performing device, perfect for developers who already own the hardware or need faster processing speeds for demanding tasks. Its high performance justifies its premium price for those with advanced requirements.
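One rough way to compare value is throughput per dollar. The Python sketch below uses only the prices and speeds quoted in this article. It ignores accessories such as power supplies, storage and displays, as well as everything else a laptop can do, so treat it as a back-of-the-envelope figure rather than a buying recommendation.

```python
# (price in USD, tokens per second) as reported in the article.
DEVICES = {
    "Raspberry Pi 5": (80, 9),
    "Nvidia Jetson Orin Nano": (250, 22),
    "MacBook Air M3": (1000, 72),
}


def tokens_per_second_per_100_usd(price_usd: float, tokens_per_second: float) -> float:
    """Throughput obtained for every 100 dollars of list price."""
    return tokens_per_second / price_usd * 100


def rank_by_value(devices: dict = DEVICES) -> list:
    """Return device names sorted from most to least throughput per dollar."""
    return sorted(
        devices,
        key=lambda name: tokens_per_second_per_100_usd(*devices[name]),
        reverse=True,
    )


for name in rank_by_value():
    price, speed = DEVICES[name]
    print(f"{name}: {tokens_per_second_per_100_usd(price, speed):.2f} tokens/s per $100")
```

By this measure the Raspberry Pi 5 comes out ahead at about 11.25 tokens per second per $100, followed by the Jetson Orin Nano at 8.80 and the MacBook Air M3 at 7.20. Absolute speed still favors the MacBook.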

Scalability and Flexibility: Beyond the Basics

DeepSeek R1 can be run with Q4 or Q8 quantization, which stores the model’s weights at roughly 4 or 8 bits each. This reduces memory use and can speed up inference on devices with limited hardware resources, typically with a small loss of precision. These techniques allow the model to adapt to a variety of hardware configurations, making it more versatile for users with different needs. While this experiment focused on the 1.5-billion-parameter model, larger models like Mistral 7B or Llama 3 8B can offer improved reasoning capabilities. However, these larger models come with increased computational demands, requiring more powerful hardware. Users can therefore choose a model size and quantization level that match their specific requirements and constraints.
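A quick estimate shows why quantization matters on small devices. The Python sketch below approximates the memory needed for the weights alone as parameters times bits per weight, divided by 8. The bit widths are nominal: real quantized formats store extra scaling data, and the runtime needs additional memory for context, so actual usage will be higher.

```python
def weight_memory_gb(parameters: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


# Parameter counts taken from the model names mentioned in the article.
MODELS = {"1.5B": 1.5e9, "7B": 7e9, "8B": 8e9}
PRECISIONS = {"16-bit": 16, "Q8": 8, "Q4": 4}  # nominal bit widths

for model, params in MODELS.items():
    row = ", ".join(
        f"{label}: {weight_memory_gb(params, bits):.2f} GB"
        for label, bits in PRECISIONS.items()
    )
    print(f"{model} -> {row}")
```

For the 1.5-billion-parameter model this works out to about 3 GB at 16 bits, 1.5 GB at Q8 and 0.75 GB at Q4. A 7B model at Q4 needs about 3.5 GB for its weights, which is why larger models call for more capable hardware.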

Key Takeaways

This experiment demonstrates the feasibility of running AI models locally across a wide range of hardware. Whether you are a beginner exploring AI on a budget or a developer seeking high performance, there is a device that meets your needs. The choice ultimately depends on factors such as budget, performance requirements and intended use cases. DeepSeek R1’s compact size, open source nature and optimization for reasoning tasks make it a versatile tool for exploring AI’s potential on local devices. By offering flexibility and scalability, it enables users to experiment, innovate and deploy AI solutions tailored to their unique circumstances.

Media Credit: Joyce Lin
