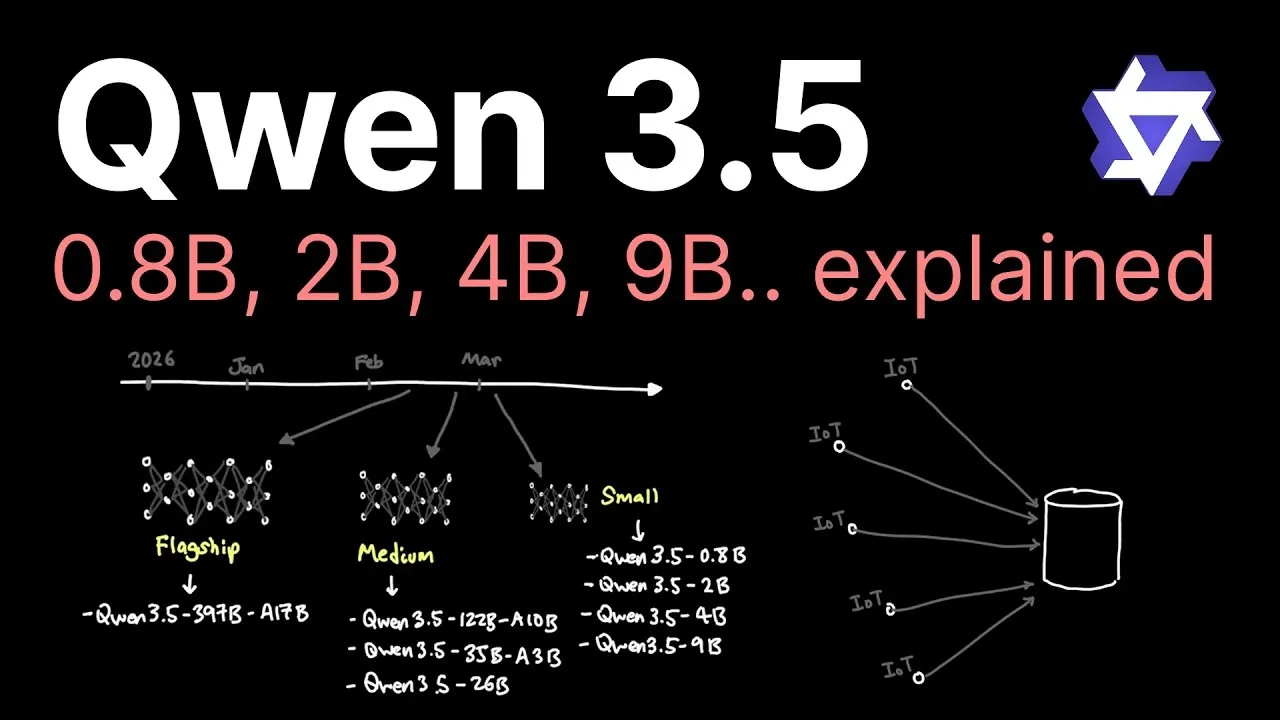

Alibaba’s Qwen 3.5 series shifts attention toward smaller, efficient models optimized for edge devices. As highlighted by Caleb Writes Code, these models range from 800 million to 9 billion parameters, balancing compactness against performance. The 800 million parameter variant is tailored for lightweight applications such as IoT devices, while the 9 billion parameter model handles complex tasks and rivals larger systems on benchmarks such as MMLU. This approach prioritizes local computation, which enhances privacy and enables offline functionality, both particularly advantageous on consumer-grade hardware and in resource-constrained environments.

In this overview, you’ll explore how Qwen 3.5 achieves high performance through innovations such as refined training techniques and enhanced architecture. Key takeaways include the practical benefits of deploying these models in IoT ecosystems, such as real-time data analysis and anomaly detection, and their adaptability to diverse use cases, from smartphones to industrial applications. Whether you’re interested in privacy-focused AI or scalable solutions for edge computing, this breakdown offers insights into how smaller models can meet the growing demands of modern AI deployment.

Alibaba’s Qwen 3.5: Smaller Models, Expansive Applications

TL;DR Key Takeaways:

- Alibaba’s Qwen 3.5 AI models prioritize smaller, efficient designs (800M to 9B parameters) for edge devices, challenging the industry trend of massive centralized systems.

- The models enable offline functionality, enhance privacy and support diverse applications, from IoT devices to high-performance computing.

- Innovations like enhanced architecture, refined training techniques and high-quality datasets allow smaller models to rival larger systems in performance.

- Optimized for edge computing, Qwen 3.5 reduces latency, improves responsiveness and supports real-time, localized AI processing for tasks like anomaly detection and image recognition.

- Alibaba’s focus on compact, versatile AI models positions it as a leader in privacy-focused and hardware-compatible AI solutions, shaping the future of AI deployment.

The Qwen 3.5 series offers a range of model sizes, catering to various computational needs without compromising on performance. Unlike many AI labs that prioritize developing massive models, Alibaba adopts an inclusive strategy, ensuring that smaller models remain powerful and versatile.

- The 9 billion parameter model delivers high performance comparable to larger counterparts, excelling in benchmarks like MMLU for complex tasks.

- The 800 million parameter model is optimized for lightweight applications, making it ideal for resource-constrained environments such as IoT devices.

This flexibility allows developers to select models that align with specific use cases, from high-performance computing to basic AI tasks on low-power devices. By addressing a broad spectrum of needs, Qwen 3.5 ensures that AI technology is accessible to a wider audience, including industries and consumers with limited computational resources.
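
To make the size trade-off concrete, the sketch below estimates how much memory the model weights alone would occupy at a few common storage precisions. The parameter counts are the ones quoted in this article; the bytes-per-parameter values are generic storage widths rather than published Qwen 3.5 specifications, and the estimate ignores runtime overhead such as activations and the attention cache, so treat the results as a lower bound.

```python
# Rough weights-only memory estimate.
# Parameter counts are taken from the article; the precisions below are
# generic storage widths (an assumption), not confirmed Qwen 3.5 formats.
PARAM_COUNTS = {"800M": 800_000_000, "9B": 9_000_000_000}
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: int, bytes_per_param: float) -> float:
    """Return weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


def memory_table() -> dict:
    """Map (model size, precision) to estimated weight memory in GB."""
    return {
        (name, precision): weight_memory_gb(count, width)
        for name, count in PARAM_COUNTS.items()
        for precision, width in BYTES_PER_PARAM.items()
    }


if __name__ == "__main__":
    for (name, precision), gb in memory_table().items():
        print(f"{name:>4} @ {precision}: {gb:.1f} GB")
```

Under these assumptions, the 9 billion parameter model needs roughly 18 GB for weights at 16-bit precision and about 4.5 GB at 4-bit, while the 800 million parameter model needs about 1.6 GB at 16-bit. That gap is one reason the smallest variant is the natural candidate for low-power devices.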

Innovations Driving Efficiency

The efficiency of Qwen 3.5 stems from several key advancements that enable smaller models to achieve results traditionally associated with larger systems:

- Enhanced architecture: The models feature optimized designs that maximize computational efficiency and intelligence density, ensuring high performance in compact forms.

- Refined training techniques: Advanced methods allow smaller models to perform complex tasks, bridging the gap between size and capability.

- High-quality datasets: The use of curated, diverse datasets ensures that the models are accurate, versatile and capable of handling a wide range of applications.

For instance, the 9 billion parameter model excels in natural language understanding and multimodal tasks, rivaling larger predecessors while requiring fewer computational resources. These innovations not only reduce hardware demands but also make AI more accessible to devices with limited capabilities, such as smartphones and IoT systems.

Optimized for Edge Devices

A defining feature of Qwen 3.5 is its optimization for edge devices, enabling local computation on consumer-grade hardware. This approach offers several distinct advantages:

- Enhanced privacy: By processing data locally, the models minimize the need to transmit sensitive information to external servers, reducing privacy risks.

- Offline functionality: The ability to operate without internet access makes these models ideal for remote or secure environments.

For example, the 9 billion parameter model can power advanced AI features on a smartphone, while the 800 million parameter model is well-suited for basic AI tasks on IoT devices. This adaptability lets Qwen 3.5 serve a wide range of users, from individual consumers to industrial applications. By enabling real-time, localized AI processing, these models improve responsiveness and reduce latency, making them particularly valuable for time-sensitive tasks.

Expanding the Potential of IoT and Edge Computing

The Qwen 3.5 series highlights the growing role of AI in IoT and edge computing, where smaller, efficient models are essential for on-device computation. The 800 million parameter variant, in particular, is well-suited for IoT ecosystems, enabling tasks such as:

- Real-time data analysis for immediate insights

- Anomaly detection to identify irregular patterns or issues

- Image recognition for applications like security and automation

By processing data directly on devices, these models reduce latency and improve responsiveness, making them ideal for applications that require immediate action. Additionally, the inclusion of multimodal capabilities, such as handling both text and images, broadens the scope of potential use cases. For instance, a smart home system powered by Qwen 3.5 could seamlessly integrate voice commands with camera feeds, creating a more intuitive and efficient user experience.

Shaping the Future of AI Deployment

Alibaba’s focus on smaller, efficient models positions it to address the growing demand for AI in edge computing and privacy-focused applications. This strategy contrasts with the approach of many AI labs, which prioritize large-scale models designed for centralized cloud deployment. The Qwen 3.5 series demonstrates that smaller models can deliver comparable performance while offering unique advantages:

- Improved privacy: Local computation reduces the need for data transmission, enhancing security.

- Reduced latency: Real-time processing ensures faster responses for critical applications.

- Wider hardware compatibility: Smaller models can run on a broader range of devices, from smartphones to IoT systems.

As the AI landscape evolves, these models could redefine industry standards, balancing efficiency with capability. By prioritizing accessibility and versatility, Qwen 3.5 sets a new benchmark for AI deployment, particularly in environments where hardware limitations and privacy concerns are paramount.

Building on a Legacy of Innovation

The Qwen 3.5 series builds on the foundation established by its predecessors, such as Qwen 2 and Qwen 3. These earlier models laid the groundwork for Alibaba’s commitment to creating versatile and accessible AI solutions. Over time, advancements in training data quality, stabilization techniques and architectural design have enabled significant performance improvements.

For example, the intelligence density of Qwen 3.5 surpasses that of its predecessors, allowing smaller models to achieve results that were previously unattainable. This progression underscores Alibaba’s dedication to pushing the boundaries of what compact AI models can accomplish, ensuring that they remain competitive in an increasingly demanding market.

Anticipating Future Developments

The Qwen 3.5 series sets the stage for future advancements in AI, as competition intensifies with upcoming releases from other labs, such as OpenAI’s GPT-5.3. Alibaba’s focus on quantization and compact models positions it to address a wide range of use cases, from consumer devices to industrial IoT systems. Potential future developments may include:

- Even smaller models with enhanced multimodal capabilities

- Improved efficiency for large-scale industrial IoT applications

- Broader integration into consumer electronics and smart devices

These innovations could further expand the possibilities for AI at the edge, making it more accessible, versatile and impactful than ever before. By continuing to prioritize efficiency and adaptability, Alibaba is poised to play a leading role in shaping the future of AI deployment.
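
The article mentions quantization without describing how it is applied to Qwen 3.5. As a general illustration only, the sketch below shows symmetric per-tensor 8-bit quantization, a common textbook scheme: each weight is divided by a shared scale and rounded to an integer in the range -127 to 127. The function names and sample weights are hypothetical, and this is not a description of Alibaba’s method.

```python
# Generic symmetric int8 quantization sketch (illustrative assumption;
# not Alibaba's actual quantization pipeline).

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    sample = [0.5, -1.0, 0.25, 0.0]  # hypothetical weights
    q, scale = quantize_int8(sample)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(sample, restored))
    print("quantized:", q)
    print("worst-case rounding error:", worst)
```

Each weight is stored in one byte instead of two or four, at the cost of a rounding error of at most half the scale per weight. Production schemes typically use finer-grained scales (per channel or per block) to keep that error small.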

Media Credit: Caleb Writes Code