AI-generated code often mirrors the quality of the processes guiding it, making structured workflows and proactive oversight essential for success. In a detailed walkthrough, Jaymin West explores how top engineers address the root causes of poor AI outputs rather than merely fixing surface-level issues. One key strategy involves implementing pre-commit hooks to enforce coding standards and block problematic changes early in the pipeline. This approach not only prevents technical debt but also ensures that AI remains an asset rather than a liability in development workflows.

In this guide, you’ll learn how to integrate quality gates to enforce rigorous testing and linting, adopt task decomposition to improve agent accuracy, and establish clear boundaries for multi-agent systems. These practices, combined with a focus on standardization and traceability, help create a consistent and reliable environment for AI-driven development. By the end, you’ll have actionable strategies to align AI outputs with your project’s goals while maintaining high-quality standards across your workflows.

Optimizing AI-Generated Code

TL;DR Key Takeaways:

- Recognize that poor AI-generated code often stems from engineering practices rather than flaws in the AI model, and address root causes to prevent technical debt.

- Implement robust quality control tools such as pre-commit hooks, quality gates, hard blocks and standardization to ensure high-quality AI-driven workflows.

- Adopt effective testing strategies, including anti-mocking and comprehensive test coverage, to validate code functionality and minimize bugs.

- Use advanced optimization techniques like task decomposition, traceability, detailed specifications and multi-agent workflows to enhance AI reliability and performance.

- Standardize workflows, outputs and tool usage to promote consistency, collaboration and efficiency in AI-driven software development.

Shifting the Mindset: Addressing the Core Problem

One of the most important steps in improving AI-generated code is recognizing that poor outputs often result from engineering practices rather than flaws in the AI model itself. Instead of patching subpar code by hand, focus on identifying and addressing the root cause, such as an unclear prompt, a missing specification or an absent guardrail. Once the cause is fixed, restart the task and let the agent regenerate the code. Resolving problems at their source in this way prevents technical debt from accumulating over time.

AI agents should be treated as tools that require precise guidance and oversight. Without clear instructions and well-defined parameters, their outputs may deviate from your project’s quality standards. By shifting your mindset to view AI as an extension of your engineering practices, you can ensure that its contributions align with your goals and expectations.

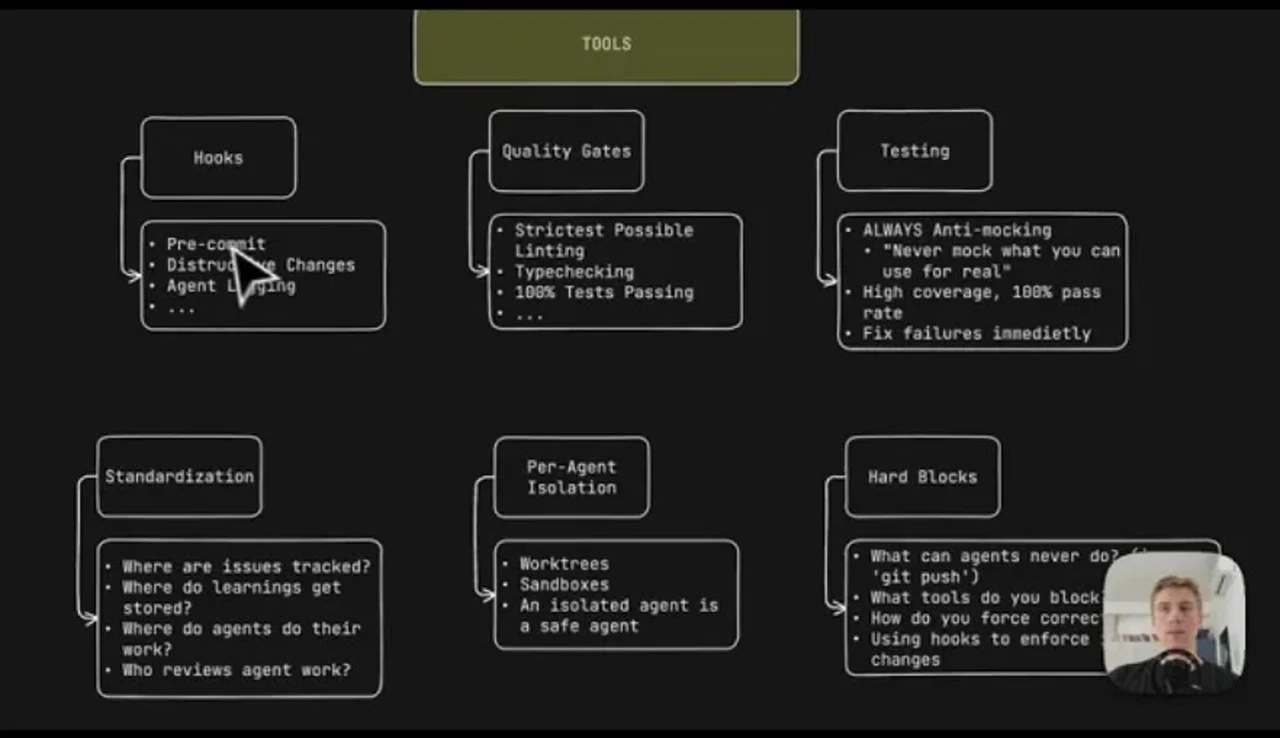

Essential Tools for Quality Control

Maintaining high standards in AI-driven workflows requires a robust set of tools designed to enforce rules, track changes and prevent errors. These tools form the backbone of quality control, making sure that AI-generated code meets your expectations. Key tools include:

- Pre-Commit Hooks: Scripts that run automatically before a change is committed. They log actions, enforce coding standards and block potentially destructive changes, acting as the first line of defense so that errors are caught early in the process.

- Quality Gates: By requiring linting, type-checking and passing all tests, quality gates ensure that only high-quality code progresses through the development pipeline.

- Hard Blocks: These restrictions prevent unauthorized actions, such as direct pushes to remote repositories, making sure that critical quality checks cannot be bypassed.

- Standardization: Centralizing issue tracking, workflows and agent learnings promotes consistency across projects and teams, reducing variability in outputs.

- Agent Isolation: Keeping agents independent minimizes conflicts in multi-agent workflows and reduces errors caused by overlapping tasks or competing priorities.

By integrating these tools into your workflow, you can establish a strong foundation for quality control, making sure that AI-generated code adheres to your standards and requirements.

Testing Philosophy: Anti-Mocking and Comprehensive Coverage

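Effective testing is a cornerstone of AI engineering. An anti-mocking approach means tests exercise the real code and its real dependencies wherever practical, instead of replacing them with simulated stand-ins. Because a mock only returns what the test author told it to return, a test built on mocks can pass even when the real integration is broken. Testing against actual behavior gives a more accurate picture of how the code will perform in real-world scenarios.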

High test coverage is equally critical, and it is a separate requirement from the pass rate: coverage measures how much of the code the tests exercise, while a 100% pass rate means every one of those tests succeeds. Requiring both before code is allowed to progress minimizes the risk of bugs and ensures that AI-generated outputs meet your quality standards. Comprehensive testing not only improves the reliability of the code but also builds confidence in the AI’s ability to contribute effectively to your projects.

Advanced Strategies for Optimization

Beyond foundational practices, advanced techniques can further enhance the reliability and performance of AI-driven coding workflows. These strategies are designed to address complex challenges and maximize the potential of AI agents:

- Traceability: Tracking agent actions, changes and timestamps provides accountability and simplifies the process of identifying and resolving errors.

- Task Decomposition: Breaking complex tasks into smaller, focused prompts improves accuracy and reduces the likelihood of errors. This approach allows agents to tackle manageable portions of a problem, leading to more reliable outputs.

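- Pit of Success: Arrange prompts, templates and tooling so that the easiest path for an agent is also the correct one. High-quality input matters most when agents work recursively and one agent's output becomes the next agent's prompt, because a weak input at the start degrades every step that follows. Clear and precise prompts keep those chained outputs consistent and aligned with project goals.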

- Detailed Specifications: Clear, specific instructions leave less room for an agent to guess at your intent. The more specific the guidance, the closer the output is likely to be to what you need.

- Multi-Agent Workflows: Coordinating multiple agents to decompose tasks, review each other’s work and perform quality checks ensures that every step of the process meets your standards.

- Agent Scope: Defining clear boundaries for agent tasks minimizes errors and ensures that each agent focuses on its specific responsibilities. This approach reduces overlap and improves efficiency.

These advanced strategies enable you to refine your workflows and achieve greater consistency, reliability and efficiency in AI-driven development.
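Two of the strategies above, traceability and agent scope, can be sketched together in a few lines of Python. In this illustrative example every write request is checked against the directories an agent is allowed to touch, and each decision is logged with a UTC timestamp. The `AgentScope`, `TraceLog` and `request_write` names, along with the agent and directory names, are assumptions made for the sketch.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from pathlib import PurePosixPath


@dataclass(frozen=True)
class AgentScope:
    """The directories (relative to the repo root) one agent may modify."""
    agent: str
    allowed_dirs: tuple[str, ...]

    def permits(self, path: str) -> bool:
        target = PurePosixPath(path)
        # Reject absolute paths and ".." segments so a path such as
        # "docs/../src/app.py" cannot escape the allowed directories.
        if target.is_absolute() or ".." in target.parts:
            return False
        return any(target.is_relative_to(d) for d in self.allowed_dirs)


@dataclass
class TraceLog:
    """An append-only record of what each agent attempted and when."""
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: str, action: str, path: str, allowed: bool) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "path": path,
            "allowed": allowed,
        }
        self.entries.append(entry)
        return entry

    def to_jsonl(self) -> str:
        """Serialize the log as one JSON object per line."""
        return "\n".join(json.dumps(e) for e in self.entries)


def request_write(scope: AgentScope, log: TraceLog, path: str) -> bool:
    """Check a write against the agent's scope and log the decision."""
    allowed = scope.permits(path)
    log.record(scope.agent, "write", path, allowed)
    return allowed


# Usage: a documentation agent may edit docs/ but nothing else.
scope = AgentScope(agent="docs-agent", allowed_dirs=("docs",))
log = TraceLog()
request_write(scope, log, "docs/guide.md")   # permitted
request_write(scope, log, "src/app.py")      # denied, but still logged
```

Logging denied requests as well as permitted ones is a deliberate choice here: a record of what an agent tried to do outside its scope is often the quickest clue that a prompt or task boundary needs tightening.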

Standardizing Workflows for Consistency

Uniformity is essential for maintaining consistency in AI-driven development. Standardizing agent outputs, prompt structures and tool usage creates a cohesive framework that streamlines processes and reduces variability. By establishing clear decision points for human intervention in multi-agent systems, you can enhance both reliability and accountability.

Standardized workflows also promote collaboration among team members, making sure that everyone operates within the same framework. This approach fosters a shared understanding of best practices and reduces the risk of miscommunication or misalignment. By prioritizing standardization, you can create a more efficient and effective development environment.
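One way to standardize agent outputs is to require every agent to return the same structured report and to reject anything that does not match. The sketch below assumes a hypothetical JSON report format; the field names, the status values and the `max_files` threshold for human review are all illustrative choices, not a format taken from the walkthrough.

```python
import json
from dataclasses import dataclass

# Hypothetical report schema shared by every agent in the workflow.
REQUIRED_FIELDS = {"agent", "task_id", "status", "summary", "files_changed"}
ALLOWED_STATUSES = {"done", "blocked", "needs_review"}


@dataclass(frozen=True)
class AgentReport:
    agent: str
    task_id: str
    status: str
    summary: str
    files_changed: tuple[str, ...]


def parse_report(raw: str) -> AgentReport:
    """Parse an agent's JSON output, raising ValueError if it is malformed."""
    data = json.loads(raw)  # invalid JSON raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("report must be a JSON object")
    missing = REQUIRED_FIELDS - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if data["status"] not in ALLOWED_STATUSES:
        raise ValueError(f"unknown status: {data['status']!r}")
    if not isinstance(data["files_changed"], list):
        raise ValueError("files_changed must be a list")
    return AgentReport(
        agent=str(data["agent"]),
        task_id=str(data["task_id"]),
        status=data["status"],
        summary=str(data["summary"]),
        files_changed=tuple(str(f) for f in data["files_changed"]),
    )


def needs_human(report: AgentReport, max_files: int = 10) -> bool:
    """Decision point: escalate anything unfinished or unusually large."""
    return report.status != "done" or len(report.files_changed) > max_files
```

Because every report has the same shape, the decision about when a person must step in can live in one small function such as `needs_human` and does not have to be restated in each agent's prompt.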

Maximizing the Potential of AI in Software Development

Preventing AI agents from producing poor-quality code requires a combination of mindset shifts, robust tools and advanced techniques. Strategies such as pre-commit hooks, quality gates, task decomposition and detailed specifications provide a solid foundation for achieving reliable, maintainable results. Tailoring these practices to your specific needs and fostering collaboration among team members will further enhance the effectiveness of your AI engineering efforts.

By adopting these strategies, you can make full use of AI in software development while minimizing the risks associated with technical debt. With the right approach, AI can become a powerful tool for innovation, efficiency and success in your projects.

Media Credit: Jaymin West
