What if we’ve been looking at AI all wrong? Nate B Jones explains how NVIDIA’s CES 2026 announcements weren’t just about faster chips or sleeker designs; they signaled a seismic shift toward the industrialization of AI infrastructure. This isn’t just tech jargon. It’s a blueprint for how AI will scale to meet the demands of a world increasingly reliant on intelligent systems. Yet while NVIDIA unveiled its vision of “AI factories” capable of powering everything from autonomous vehicles to real-time analytics, much of the buzz focused on hardware specifics and missed the bigger picture. The real story isn’t about chips; it’s about the ecosystems being built around them and the industries they’re poised to transform.

In this overview, we’ll unpack what AI industrialization truly means and why it’s a pivotal moment for the future of technology. From NVIDIA’s new Rubin platform to the rising competition among AI infrastructure providers, we’ll explore the forces reshaping the landscape of innovation. You’ll discover how concepts like inference optimization and scalable AI systems are more than technical achievements; they’re the foundation of a world where AI becomes as ubiquitous as electricity. Whether you’re a tech enthusiast, a business leader, or simply curious about where AI is headed, this breakdown will challenge the way you think about the future. Because sometimes, the most important shifts are the ones hiding in plain sight.

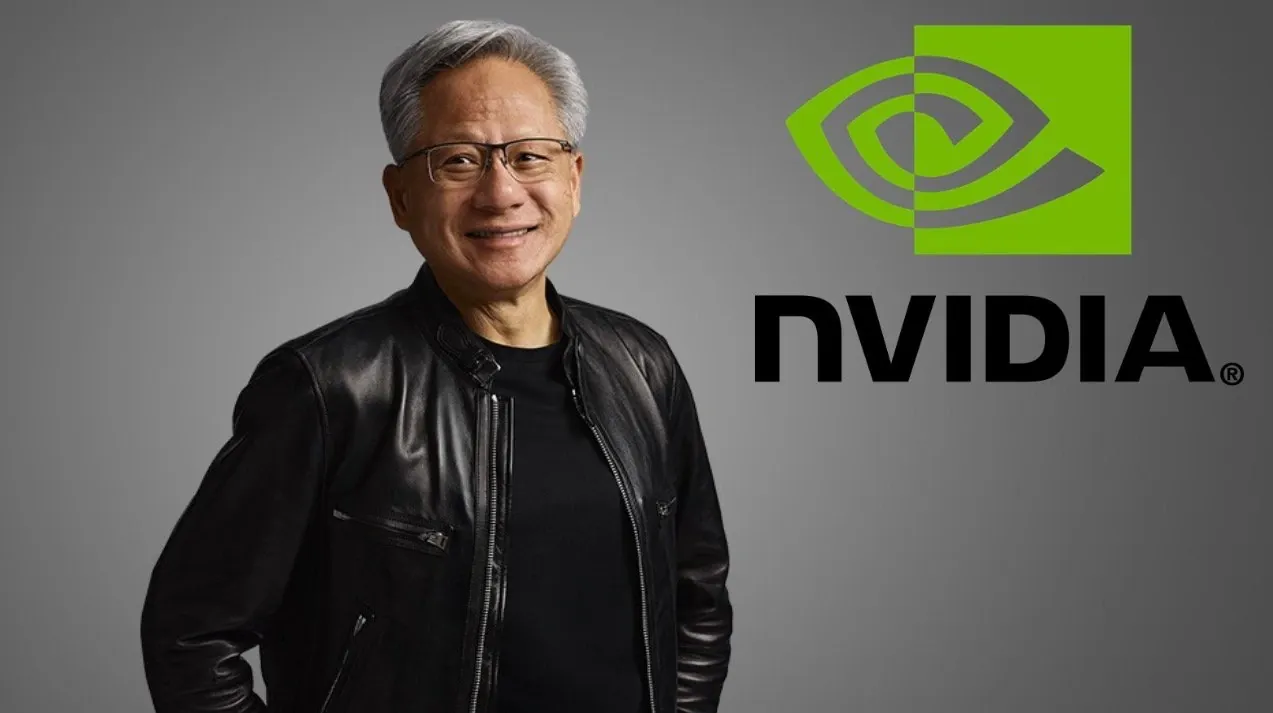

NVIDIA’s AI Factory Vision

TL;DR Key Takeaways:

- NVIDIA introduced the concept of “AI factories” at CES 2026, emphasizing scalable, cost-efficient systems to support AI infrastructure at unprecedented scales, marking a shift from chip performance to industrialized AI systems.

- The Rubin platform, NVIDIA’s AI factory blueprint, integrates advanced technologies like the Vera CPU, Rubin GPU, NVLink 6 interconnect, and ConnectX-9 SuperNIC to optimize AI inference and address scaling challenges.

- Inference optimization is critical because inference is the most resource-intensive aspect of AI operations, with NVIDIA’s solutions focusing on reducing costs, improving efficiency, and ensuring reliability for businesses adopting AI.

- OpenAI has secured significant infrastructure and memory supply chain partnerships with NVIDIA, AMD, AWS, and others, locking in over 26 gigawatts of AI capacity to meet growing demand and maintain competitiveness.

- The industrialization of AI extends beyond data centers, driving advancements in robotics, autonomous vehicles, and consumer devices, while fostering a collaborative ecosystem of suppliers to meet global AI infrastructure needs.

What is AI Industrialization?

AI has transitioned from experimental projects and niche applications into a new phase of industrialization. This phase prioritizes the development of scalable, always-on systems capable of managing massive workloads efficiently. At CES 2026, the focus shifted from individual chip performance to the infrastructure required to support AI at scale, marking a pivotal moment for the industry.

Key factors driving this shift include:

- Rising demand for AI services, such as conversational AI, real-time analytics, and generative models.

- The need for systems that are cost-effective, power-efficient, and highly reliable.

- Increasing complexity in AI workloads, necessitating advanced infrastructure solutions.

This industrialization represents a turning point as companies race to develop AI factories capable of meeting these demands while maintaining economic viability. These factories are not just about hardware; they are about creating ecosystems that can support the growing role of AI in society.

NVIDIA’s Rubin Platform: A Blueprint for AI Factories

At the core of NVIDIA’s strategy is the Rubin platform, a rack-scale AI factory system designed to optimize inference at scale. This platform integrates several technologies to address the challenges of scaling AI infrastructure effectively. Key components of the Rubin platform include:

- The Vera CPU and Rubin GPU, which deliver high-performance computing tailored for AI workloads.

- NVLink 6 interconnect, enabling high-speed data transfer across systems.

- ConnectX-9 SuperNIC, providing advanced networking capabilities for efficient communication.

A standout feature of the Rubin platform is its inference context memory, which improves memory management and data movement efficiency. By addressing bottlenecks in scaling, the platform is designed to let AI systems handle increasingly complex workloads without compromising performance or reliability. For businesses, this means a practical way to optimize the cost and efficiency of AI inference, making it a cornerstone of future AI infrastructure.

Why Inference Optimization Matters

Inference, the process of running trained AI models, is now the most resource-intensive aspect of AI operations. It accounts for the majority of operational costs, making its optimization a critical focus for the industry. Companies are prioritizing efforts to reduce the cost per token, minimize latency, and ensure high reliability in their AI systems.

NVIDIA’s Rubin platform directly addresses these challenges by offering a scalable solution that balances performance with cost efficiency. This is particularly important as AI adoption accelerates across industries, from healthcare to finance, creating an urgent need for infrastructure capable of keeping pace with demand. By optimizing inference, businesses can unlock the full potential of AI while managing operational expenses effectively.
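To make the cost-per-token idea concrete, here is a minimal Python sketch of the arithmetic. It is an illustration only: the function name, the hourly system cost, and the throughput figures are hypothetical placeholders, not numbers from NVIDIA or from the video.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Estimate the cost of serving one million tokens.

    hourly_cost_usd:   assumed all-in hourly cost of the system (hardware, power, hosting)
    tokens_per_second: assumed sustained inference throughput of that system
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical figures, chosen only to show the relationship.
baseline = cost_per_million_tokens(hourly_cost_usd=100.0, tokens_per_second=50_000)
improved = cost_per_million_tokens(hourly_cost_usd=100.0, tokens_per_second=100_000)

print(f"baseline: ${baseline:.4f} per million tokens")
print(f"improved: ${improved:.4f} per million tokens")
```

At a fixed hourly cost, doubling throughput halves the cost per token, which is why throughput and memory efficiency gains at the rack level translate so directly into inference economics.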

OpenAI’s Role in Scaling AI Infrastructure

OpenAI has emerged as a key player in the industrialization of AI, making substantial investments to secure the infrastructure necessary for its operations. The company has established large-scale partnerships with NVIDIA, AMD, Broadcom, AWS, and CoreWeave, securing over 26 gigawatts of AI capacity. These collaborations ensure that OpenAI can meet the escalating demand for its services while maintaining a competitive edge in the rapidly evolving AI landscape.

In addition to infrastructure, OpenAI has focused on securing memory supply chains. Agreements with Samsung and SK Hynix provide the DRAM required for its AI workloads, underscoring the importance of a robust, multi-supplier ecosystem. This approach highlights how the industrialization of AI is not just about hardware but also about creating resilient supply chains to support the growing complexity of AI operations.

Rising Competition and Alternative Ecosystems

While NVIDIA remains a dominant force in AI infrastructure, competition is intensifying. Companies like AMD and Broadcom are expanding their presence in the market, while Google continues to develop its TPU ecosystem. Additionally, hyperscalers and custom silicon solutions are gaining traction, offering tailored options for specific AI workloads.

This growing competition is fostering the development of alternative ecosystems that challenge NVIDIA’s dominance. As the AI industry evolves, it is becoming evident that no single supplier can meet the global demand for AI infrastructure. Instead, a diverse network of players will shape the future, driving innovation and creating a more dynamic and competitive landscape.

AI Beyond Data Centers: Expanding Horizons

The industrialization of AI infrastructure is not limited to data centers. It is enabling new applications across a wide range of fields, including robotics, autonomous vehicles, and consumer devices. The emergence of ambient intelligence, meaning AI that operates in real time and at scale, is transforming industries and enhancing everyday experiences.

For example:

- Autonomous vehicles depend on AI factories to process vast amounts of data in real time, ensuring safety and operational efficiency.

- Robotics in manufacturing and healthcare is using AI to improve precision, adaptability, and productivity.

- Consumer devices are integrating AI to deliver smarter, more responsive, and personalized user experiences.

These advancements demonstrate the far-reaching impact of AI industrialization, extending its benefits beyond traditional computing environments and into the fabric of daily life.

The Road Ahead: A Collaborative Ecosystem

As the industrialization of AI progresses, NVIDIA is poised to remain a leader in the field. However, the future of AI infrastructure will be shaped by a collaborative ecosystem of suppliers, each contributing to the growing demand for scalable and efficient solutions. The AI factory model is set to redefine industries, embedding AI into every aspect of digital and physical experiences.

This evolution is not merely a technological shift; it represents a fundamental transformation in how businesses and societies operate. By understanding and embracing these changes, you can position yourself to thrive in an AI-driven future, where collaboration and innovation will be the keys to success.

Media Credit: AI News & Strategy Daily | Nate B Jones
