The landscape of wearable technology is on the verge of its most significant shift since the debut of the Apple Watch. While the Apple Vision Pro introduced the world to the concept of spatial computing, its bulk and price kept it within the realm of enthusiasts and professionals. Now, reports of an internal Apple effort codenamed Project Atlas suggest that the company is pivoting toward a sleeker, far more accessible product: the Apple AR Glasses.

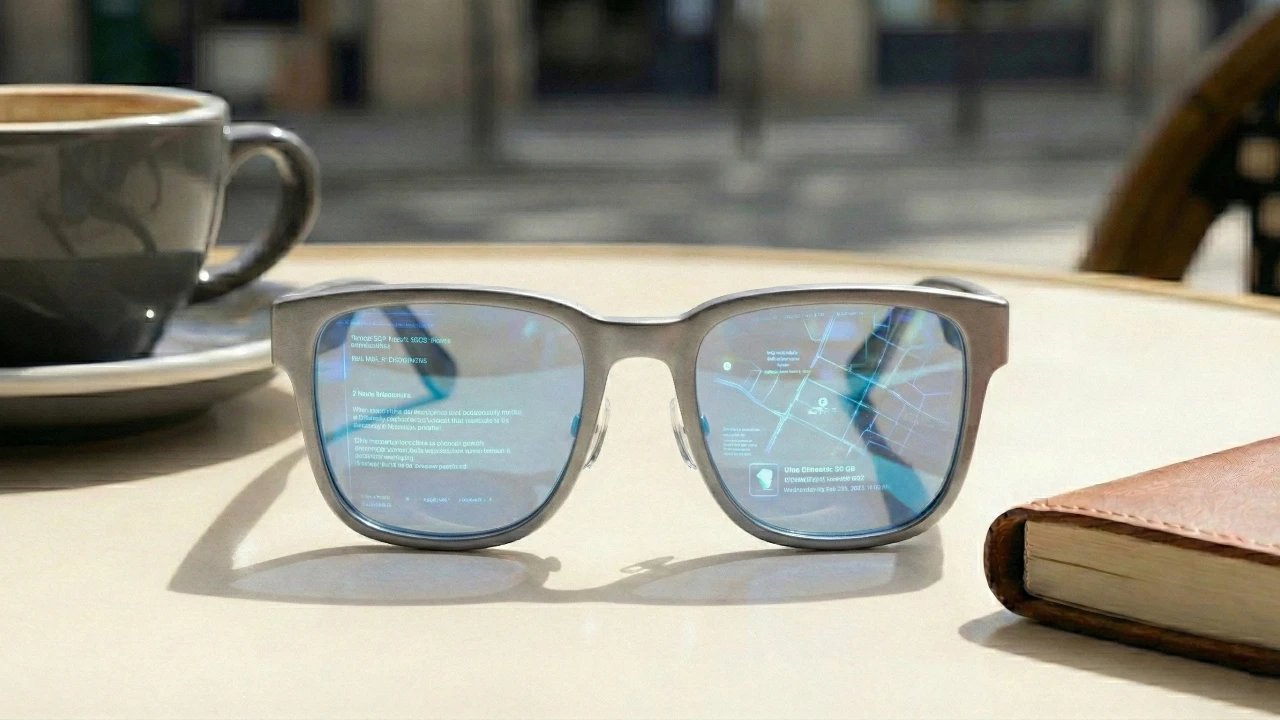

Commonly referred to in leaks as Apple Glass, these spectacles represent a strategic departure from the “helmet” form factor. Instead of replacing reality with a digital twin, Apple is reportedly building a device designed to enhance the world you already see, focusing on portability, fashion, and artificial intelligence.

The Vision for Project Atlas

For years, the industry expected Apple to jump straight into high-end augmented reality (AR) with transparent lenses and complex holographic overlays. However, recent leaks from supply chain analysts and internal sources indicate a more pragmatic two-stage rollout.

The first generation of Apple’s smart glasses, internally designated N50, is rumored to be “AirPods with eyes.” In other words, the initial version will reportedly have no display at all, focusing instead on audio, high-fidelity cameras, and a suite of sensors. By forgoing a display in the first iteration, Apple can keep weight and power consumption low enough that the glasses could pass for high-end designer frames.

A Phased Evolution

- Phase 1 (2026–2027): Smart glasses focused on AI, photography, and audio.

- Phase 2 (2028+): True AR glasses featuring transparent micro-OLED displays and waveguide technology.

Design and Aesthetic: Fashion First

Apple is acutely aware that something worn on the face is a fashion statement first and a computer second. Leaks suggest the company is collaborating with luxury eyewear manufacturers to ensure the frames are as stylish as they are functional.

Key Design Features

- Lightweight Materials: Expect a mix of high-grade plastics and lightweight metals like titanium.

- Personalization: Rumors point to multiple frame shapes (round, square, aviator) and various colorways to appeal to a broad demographic.

- Prescription Support: Much like the Vision Pro’s partnership with Zeiss, Apple Glass is expected to offer custom prescription inserts that snap into the frame magnetically or mechanically.

- Discreet Integration: The cameras and sensors are expected to be hidden behind “smoked” glass or integrated into the hinges, avoiding the conspicuous look that earned early Google Glass wearers the “glasshole” label.

Rumored Technical Specifications: What’s Under the Hood?

The engineering challenge of Apple Glass is immense: fitting a computer into a frame that weighs less than 50 grams. To solve this, Apple is reportedly taking a “tethered” approach, offloading the heavy lifting to the user’s iPhone.

Predicted Hardware Specs

| Component | Rumored Specification |
|---|---|
| Processor | Custom SiP (System-in-Package) based on the Apple Watch S-Series |
| Connectivity | Ultra-Wideband (UWB), Wi-Fi 7, and Bluetooth 5.4 |
| Cameras | Dual high-resolution sensors for “Visual Intelligence” |
| Audio | Directional beamforming speakers and “Studio Quality” microphones |
| Battery Life | 12–16 hours of “mixed usage” with a charging case |
| Interface | Touch-sensitive temples and advanced voice control |

The Silicon Strategy

Rather than using the power-hungry M-series or A-series chips found in Macs and iPhones, Apple is reportedly leaning on the efficiency of its Watch silicon. The goal is to maximize battery life while providing enough power to process camera data and spatial audio. Complex AI tasks, such as real-time language translation or object recognition, would be offloaded wirelessly to the iPhone over a high-bandwidth, low-latency connection.

The Software Experience: Siri and Visual Intelligence

The heart of Apple Glass isn’t a screen but an interface powered by Apple Intelligence. Without a traditional display, interaction shifts from “touch and see” to “look and ask.”

Visual Intelligence

Using its built-in cameras, Apple Glass is expected to act as a third eye for the user. Look at a restaurant, tap the frame, and you could ask Siri for the menu or its current rating. If you’re traveling in a foreign country, the glasses could “read” signs and whisper the translation directly into your ears.

Audio Privacy

A major concern with smart glasses is sound leakage. Apple is reportedly using directional audio technology that beams sound toward the wearer’s ear canal, aiming to keep notifications and phone calls private even in a crowded elevator. More broadly, the goal is to move from a device you look at to a device that looks out at the world with you, providing context only when you need it.

Use Cases: How Apple Glass Changes the Day-to-Day

While the Vision Pro is for immersive work and entertainment, Apple Glass is for the “in-between” moments of life.

- Hands-Free Navigation: Instead of looking down at your phone for walking directions, you could follow subtle haptic taps on the left or right temple, along with audio cues.

- Contextual Reminders: The glasses could recognize a person you’re meeting and provide a brief Siri whisper reminding you of their name or the last time you spoke.

- Memory Capture: Capture first-person spatial photos and videos instantly without pulling out a phone, so you can stay present in the moment.

- Health Tracking: Complementing the Apple Watch, the glasses could track head posture and blink rate (to monitor fatigue), and might even include sensors for hearing health.

Pricing and Market Positioning

If the Vision Pro is the “Mac Pro” of wearables, Apple Glass is intended to be the “iPhone.” To achieve mass adoption, Apple must price it competitively against current market leaders like the Meta Ray-Bans.

Current leaks suggest a starting price in the $600 to $700 range. That would position Apple Glass as a premium accessory: more expensive than a pair of AirPods Max, but significantly more affordable than a MacBook or the Vision Pro. The pricing is meant to encourage iPhone users to see the glasses as an essential “plus-one” to their existing ecosystem.

The Roadmap to Release

While development has reportedly been ongoing for nearly a decade, the timeline is finally narrowing. Production bottlenecks in battery miniaturization and thermal management within the frame have caused several delays, but the consensus among industry insiders is that Apple is targeting a late 2026 announcement.

Apple traditionally uses its Worldwide Developers Conference (WWDC) to announce new platforms, giving developers six to nine months to build apps before the hardware hits shelves. If Apple follows that pattern, we could see a teaser of “Vision-lite” apps at WWDC in June 2026, a full announcement later in the year, and a retail launch in spring 2027.

Conclusion: The End of the Smartphone Era?

Apple Glass isn’t just a new gadget; it’s a precursor to the “post-iPhone” world. By moving the digital interface from the palm of the hand to the line of sight, Apple is attempting to solve the problem of digital distraction. Instead of being buried in a screen, users are invited back into the physical world, supported by an invisible layer of intelligence.

While the first generation may lack the “wow” factor of a holographic display, its success will lie in its invisibility. If Apple can make a pair of glasses that people actually want to wear for twelve hours a day, they will have won the most important battle in the history of personal computing: the battle for the face.

Apple Glass vs. Meta’s Smart Glasses

| Feature | Apple Glass (Rumored 2026/27) | Meta Ray-Ban (Current Gen 2) | Meta Orion (AR Prototype) |
|---|---|---|---|
| Primary Display | None (HUD/AR in later models) | None (Audio & Camera only) | Holographic Waveguide |
| Field of View | N/A (Initial Gen) | N/A | 70 Degrees |
| Processor | Custom SiP (Apple Watch-based) | Snapdragon AR1 Gen 2 | Custom Silicon + Compute Puck |
| Connectivity | iPhone Tethered (Required) | Standalone (App Sync) | Wireless Compute Puck |
| AI Integration | Apple Intelligence (Siri) | Meta AI (Multimodal) | Meta AI + Environmental Sense |
| Camera | Dual High-Res (Spatial ready) | Single 12MP Ultra-wide | Multiple (Track + Scene) |
| Battery Life | All-day (Expected 12–16 hrs) | 4 Hours (Usage) / 32 hrs case | ~2 Hours (Active) |
| Weight | Est. < 50 grams | 48–50 grams | ~98 grams |
| Input Methods | Touch, Voice, Visual Intelligence | Voice, Touch, Gesture | Voice, Eye, Neural Wristband |
| Price | $600–$700 (Rumored) | $299 (Starting) | N/A (Internal R&D) |


Disclosure: Some of our articles include affiliate links. If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.