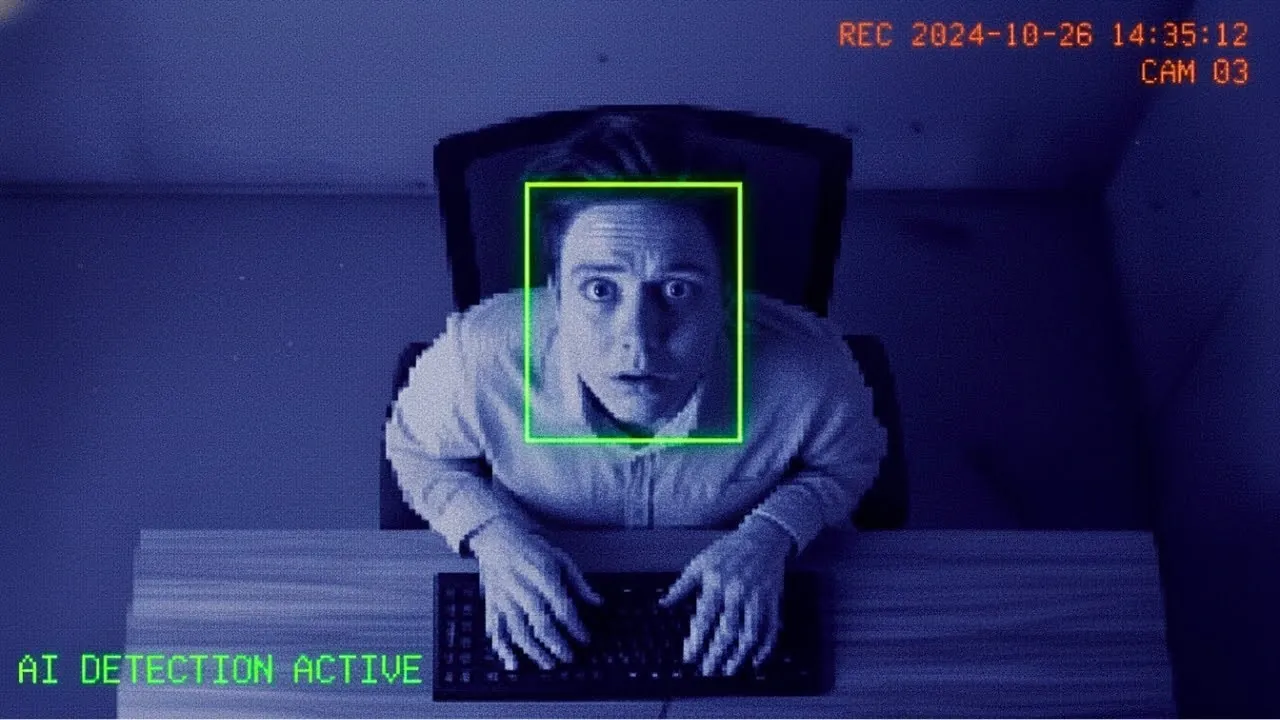

Imagine walking into your office in 2027, only to find that every click, keystroke, and glance is being tracked by an AI system more vigilant than any human supervisor could ever be. It’s not just your productivity that’s being monitored: your tone of voice in meetings, your facial expressions during video calls, and even the time you spend away from your desk are carefully logged. This isn’t the plot of a dystopian novel; it’s the potential future of work, where efficiency comes at the cost of privacy and the line between human and machine blurs uncomfortably. As AI-powered workplace monitoring systems grow more advanced, they promise unparalleled productivity but also raise unsettling questions about autonomy, trust, and the psychological toll of constant surveillance.

In this overview, All About AI explores the chilling realities of AI-driven workplaces and the ethical dilemmas they present. You’ll discover how technologies like object detection models and voice synthesis tools are reshaping the employee experience, for better or worse. From the promise of real-time feedback to the psychological strain of perpetual judgment, this glimpse into the future will challenge you to consider what kind of workplace we’re building and whether the trade-offs are worth it. As we unpack the implications of these systems, one question looms large: can we harness the power of AI without losing sight of our humanity?

AI Workplace Monitoring Ethics

TL;DR Key Takeaways:

- AI-powered workplace monitoring systems use technologies like YOLO and OpenCV to track employees and measure active work time and productivity, often deducting pay for inactivity or absences.

- Real-time feedback mechanisms, including voice synthesis tools like Quen, provide immediate performance evaluations, which can enhance productivity but also lead to stress, reduced morale, and a sense of constant judgment.

- These systems extend beyond physical tracking, logging screen activity, keystrokes, and absences, creating a comprehensive surveillance environment that raises significant privacy and psychological concerns.

- Customizable monitoring features, such as tracking hand movements, facial expressions, and tone of voice, offer flexibility but amplify the potential for misuse and ethical violations in workplace environments.

- The balance between efficiency and privacy is a critical challenge, as these systems risk dehumanizing employees and fostering burnout, highlighting the need for ethical oversight to ensure AI serves as a tool for progress rather than oppression.

AI Monitoring: Tracking Every Move

At the heart of these systems lies an AI framework designed to track employees closely. Using object detection models such as YOLO (You Only Look Once), the system identifies and monitors your physical presence within designated workspaces. Bounding boxes outline your position, ensuring that every moment spent at your desk is accounted for. The AI calculates your earnings based on active work time, automatically deducting pay for absences or inactivity. This level of scrutiny transforms the workplace into a tightly controlled environment where every second is measured and monetized, leaving little room for flexibility or personal discretion.
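To make the presence-based pay idea concrete, here is a minimal sketch in Python. It assumes a detector has already produced one list of person bounding boxes per video frame; the `Box` type, the `min_overlap` threshold, and the flat per-frame accrual rule are illustrative assumptions, not details taken from the system shown in the video.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixels: (x1, y1) top-left, (x2, y2) bottom-right."""
    x1: float
    y1: float
    x2: float
    y2: float

    def area(self) -> float:
        # Degenerate or inverted rectangles count as zero area.
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def overlap_fraction(person: Box, zone: Box) -> float:
    """Fraction of the person's box that lies inside the workspace zone."""
    intersection = Box(
        max(person.x1, zone.x1),
        max(person.y1, zone.y1),
        min(person.x2, zone.x2),
        min(person.y2, zone.y2),
    )
    person_area = person.area()
    return intersection.area() / person_area if person_area > 0 else 0.0


def earnings(frames, zone, hourly_rate, fps, min_overlap=0.5):
    """Return (active_seconds, pay).

    frames: one list of person Boxes per video frame (hypothetical detector output).
    A frame counts as active if any person box is mostly inside the zone.
    """
    active_frames = sum(
        1
        for boxes in frames
        if any(overlap_fraction(box, zone) >= min_overlap for box in boxes)
    )
    active_seconds = active_frames / fps
    return active_seconds, active_seconds * hourly_rate / 3600.0


desk_zone = Box(0, 0, 100, 100)
detections = [
    [Box(10, 10, 50, 50)],     # person at the desk
    [],                        # nobody detected
    [Box(90, 90, 200, 200)],   # person mostly outside the zone
]
seconds, pay = earnings(detections, desk_zone, hourly_rate=3600.0, fps=1.0)
```

Only the first frame counts as active in this example, which shows how sensitive such a scheme is to the zone boundary and the overlap threshold that someone has to choose.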

Real-Time Feedback: Productivity Under Pressure

These systems extend beyond merely tracking your physical presence. They analyze your work performance in real time, monitoring on-screen activities such as coding, document editing, or other tasks. Advanced AI models, paired with voice synthesis tools like Quen, provide immediate feedback through synthesized voice prompts, offering corrections or suggestions as you work. While this feature has the potential to enhance productivity, the constant evaluation can lead to heightened stress, reduced morale, and a pervasive sense of being under surveillance. Employees may feel as though they are perpetually judged, creating an environment where creativity and innovation are stifled by the pressure to meet AI-driven standards.

Surveillance Features: Beyond the Surface

The scope of these systems goes far beyond simple observation. They log screenshots of your screen activity, monitor keystrokes, and record absences. For example, if you step away from your desk without logging out, the system automatically deducts pay for the time you are gone. Such invasive measures create a comprehensive surveillance mechanism that leaves little room for personal privacy. Over time, this level of oversight can foster a culture of fear and mistrust, eroding workplace relationships and diminishing employee satisfaction. The psychological toll of such constant monitoring cannot be overstated, as employees may feel dehumanized and reduced to mere data points.
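The absence deduction described above can be sketched as a pass over a timestamped presence log. This is a rough illustration only: the log format, the `grace_seconds` parameter, and the function names are hypothetical, and the source does not say whether the real system allows any grace period at all.

```python
def absence_intervals(samples, grace_seconds=0.0):
    """Find stretches of absence in a presence log.

    samples: list of (timestamp_seconds, present) pairs, sorted by time.
    Returns (start, end) pairs for absences longer than grace_seconds.
    """
    intervals = []
    away_since = None
    for timestamp, present in samples:
        if not present and away_since is None:
            away_since = timestamp              # absence begins
        elif present and away_since is not None:
            if timestamp - away_since > grace_seconds:
                intervals.append((away_since, timestamp))
            away_since = None                   # absence ends
    # An absence that is still open when the log ends is closed at the last sample.
    if away_since is not None:
        last_timestamp = samples[-1][0]
        if last_timestamp - away_since > grace_seconds:
            intervals.append((away_since, last_timestamp))
    return intervals


def deduction(intervals, hourly_rate):
    """Pay withheld for the total time covered by the absence intervals."""
    away_seconds = sum(end - start for start, end in intervals)
    return away_seconds * hourly_rate / 3600.0


presence_log = [
    (0, True),
    (60, False),    # leaves the desk
    (120, False),
    (360, True),    # returns after five minutes
    (400, False),   # brief ten-second gap
    (410, True),
]
absences = absence_intervals(presence_log, grace_seconds=30)
withheld = deduction(absences, hourly_rate=36.0)
```

With a 30-second grace period the ten-second gap is ignored, while the five-minute absence is charged in full. Setting `grace_seconds` to zero reproduces the stricter behavior in which every detected absence costs money.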

Technical Implementation: The Machinery Behind the Monitoring

The technology driving these systems is both advanced and efficient. Object detection models like YOLO track individuals in the video feed, while OpenCV handles video streaming for real-time monitoring. CUDA, NVIDIA’s parallel computing platform, accelerates processing on the GPU, keeping latency low even in high-demand environments. Voice synthesis tools like Quen provide dynamic, customizable feedback, allowing the system to interact with employees in a seemingly human-like manner. Together, these technologies create a powerful yet deeply intrusive monitoring infrastructure, capable of reshaping the workplace experience in profound ways.
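The overall shape of such a pipeline can be sketched without any of the actual libraries. In the sketch below, the frame source and the detector are stand-ins: in a real setup the frames would presumably come from an OpenCV video capture and `detect` would wrap a YOLO model running on a GPU, but none of that is imported here, and the `(label, confidence)` detection format is a simplifying assumption.

```python
import time
from typing import Callable, Iterable, Iterator, List, Tuple

Detection = Tuple[str, float]  # (class label, confidence), a simplified format


def run_pipeline(
    frames: Iterable[object],
    detect: Callable[[object], List[Detection]],
    min_confidence: float = 0.5,
) -> Iterator[Tuple[int, bool, float]]:
    """Yield (frame_index, person_present, latency_seconds) for each frame."""
    for index, frame in enumerate(frames):
        started = time.perf_counter()
        detections = detect(frame)              # the expensive step in practice
        latency = time.perf_counter() - started
        present = any(
            label == "person" and confidence >= min_confidence
            for label, confidence in detections
        )
        yield index, present, latency


def fake_detect(frame: object) -> List[Detection]:
    """Stand-in detector for demonstration; not a real model."""
    return [("person", 0.9)] if frame == "occupied" else [("chair", 0.8)]


results = list(run_pipeline(["occupied", "empty", "occupied"], fake_detect))
presence = [(index, present) for index, present, _ in results]
```

Timing the `detect` call per frame is the point at which GPU acceleration would matter, since detection latency bounds how many frames per second the monitor can process.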

Customizable Monitoring: Tailored Surveillance

One of the most striking features of these systems is their customizability. Employers can configure the AI to monitor additional parameters, such as hand movements, facial expressions, or even tone of voice during virtual meetings. This flexibility allows organizations to tailor the system to their specific needs, enhancing its utility in diverse work environments. However, this same adaptability amplifies the potential for misuse. With the ability to enforce extreme levels of control, these systems risk crossing ethical boundaries, transforming workplaces into environments of constant surveillance and micromanagement. The balance between customization and ethical responsibility becomes increasingly precarious as these technologies evolve.
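One way to picture this configurability is as a set of feature toggles. The following sketch is purely illustrative: the `MonitoringConfig` class and its field names are hypothetical and simply mirror the parameters mentioned in this article, with every optional feature switched off by default.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class MonitoringConfig:
    """Hypothetical feature toggles; names mirror the parameters discussed above."""
    track_presence: bool = True
    log_screenshots: bool = False
    log_keystrokes: bool = False
    track_hand_movements: bool = False
    track_facial_expressions: bool = False
    track_voice_tone: bool = False

    def enabled_features(self):
        """Names of all features that are switched on, in alphabetical order."""
        return sorted(name for name, enabled in asdict(self).items() if enabled)


default_config = MonitoringConfig()
expanded_config = MonitoringConfig(track_facial_expressions=True, track_voice_tone=True)
```

Keeping the defaults off means each expansion of surveillance has to be an explicit, reviewable choice, which is one small way to make the ethical trade-off visible in the configuration itself.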

Efficiency vs. Privacy: A Delicate Trade-Off

The tension between efficiency and privacy lies at the core of these systems. On one hand, tools like CUDA ensure high performance and minimal latency, allowing seamless monitoring that can optimize workflows. On the other hand, the invasive nature of constant surveillance raises serious ethical questions. Employees may feel dehumanized, reduced to data points in a system that prioritizes productivity over well-being. Over time, this could lead to burnout, decreased job satisfaction, and higher turnover rates, ultimately undermining the very efficiency these systems aim to achieve. The challenge lies in finding a balance that respects both organizational goals and individual rights.

Dystopian Implications: A Warning for the Future

The rise of AI-driven workplace monitoring serves as a stark reminder of the potential dangers of unchecked technological advancement. While these systems offer unparalleled efficiency and control, they also highlight the risks of prioritizing productivity at the expense of human dignity and autonomy. Constant surveillance and real-time feedback can create an oppressive atmosphere, eroding trust and collaboration among employees. Without ethical oversight, these technologies risk transforming workplaces into environments of fear and alienation, where employees feel more like cogs in a machine than valued contributors to a shared mission.

The Case for Ethical AI

As AI continues to reshape the future of work, it is crucial to balance innovation with ethics. Systems like these demonstrate the considerable potential of AI, but they also underscore the risks of unchecked surveillance. Organizations must prioritize transparency, privacy, and employee well-being to harness the power of AI responsibly. The goal should be to create technologies that empower employees and enhance productivity without compromising their dignity or autonomy. By fostering a culture of ethical innovation, businesses can ensure that AI serves as a tool for progress rather than a source of oppression.

Media Credit: All About AI

Disclosure: Some of our articles include affiliate links. If you buy something through one of these links, Geeky Gadgets may earn an affiliate commission. Learn about our Disclosure Policy.